The AI ROI Reckoning: How to Spot the AI Pilot Trap Before Budgets Are Wasted

AI Value Gap Series | Part 1 of 14

How to identify weak AI pilots early, measure ROI risk, and avoid low-value AI investment.

Is Your AI Strategy a Value Driver or a Cost Center?

Introduction: The End of the “Experimentation” Era

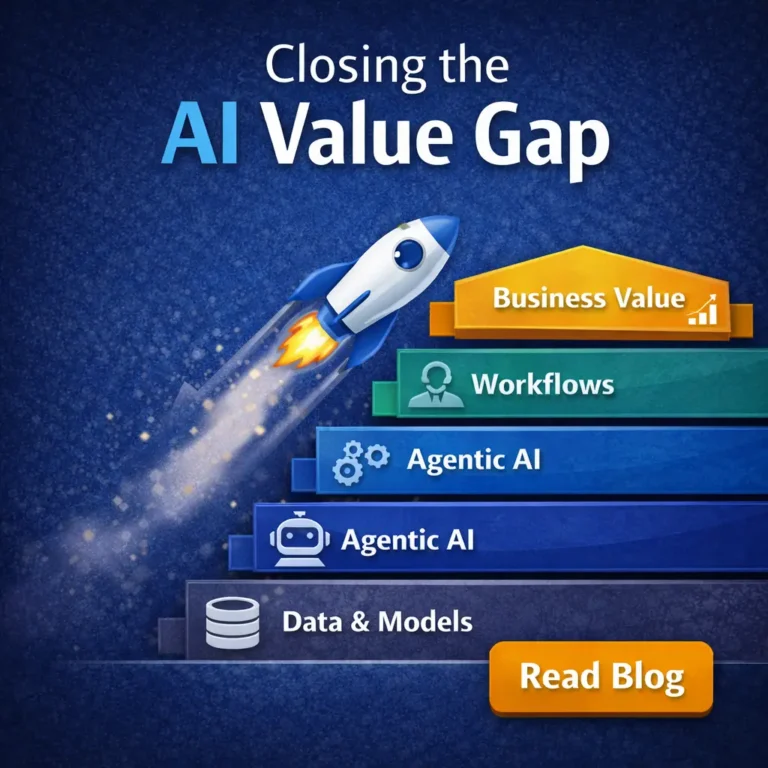

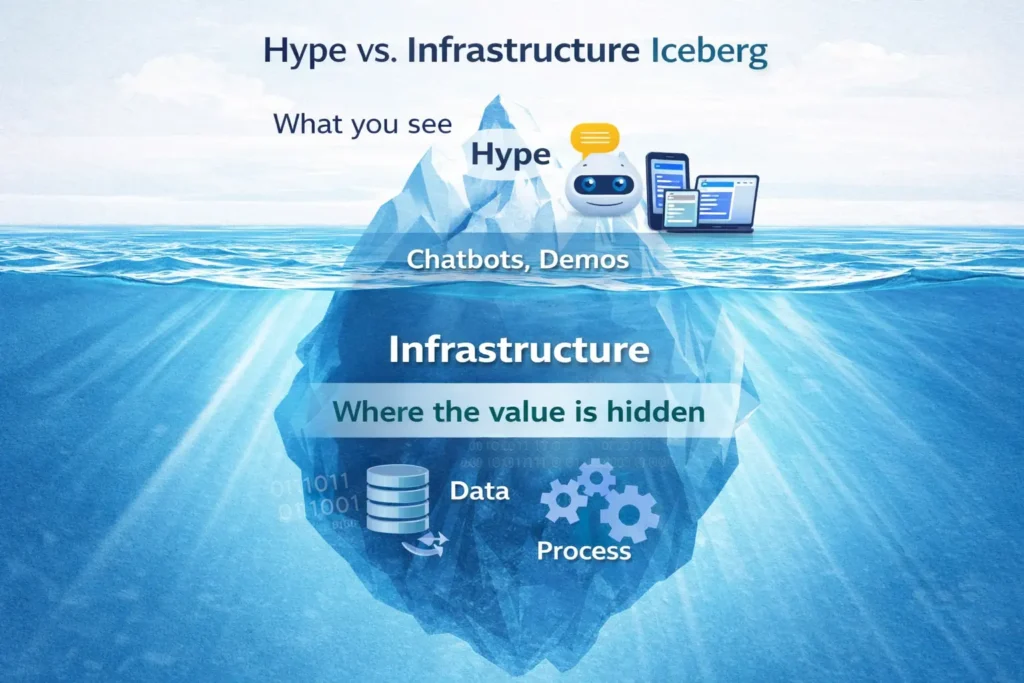

Artificial intelligence is no longer judged by how impressive it looks in a demo. In 2026, it is judged by whether it creates measurable business value. That shift has triggered the AI ROI (return on investment) reckoning. Companies have invested heavily in copilots, chatbots, automation assistants, and early agentic systems, yet many of these initiatives still fail to improve cost, speed, quality, or margin in a meaningful way.

At the center of this problem is the AI Pilot Trap. A pilot may generate excitement and show early promise, but if it is not tied to a real workflow bottleneck, trusted business data, and a clear KPI (key performance indicator), it rarely becomes a true value engine. Instead, it becomes another experiment that consumes budget without changing the business.

This article explains why that happens, how to spot the warning signs early, and how to use a simple AI ROI Risk Assessment Tool before more money is committed. The question is no longer whether companies are experimenting with AI, but whether those experiments can produce measurable business value.

1. What the AI Pilot Trap Really Is

The AI Pilot Trap is the pattern in which promising AI initiatives generate early enthusiasm but fail to create lasting business value. A pilot may look successful in a demo, attract internal attention, and even win short-term support. But unless it is tied to an important workflow, measured against a clear KPI, and connected to trusted business data, it rarely scales into a true value engine.

A Pilot Can Look Successful Without Creating Value

Many AI pilots appear impressive at first. They may produce faster summaries, smoother interactions, or more polished outputs than existing manual work. That early success can create the impression that the organization is making real progress.

The problem is that visible capability is not the same as business impact. A pilot can work well in a controlled setting and still fail to improve cost, cycle time, quality, throughput, or margin in a meaningful way.

Most Pilots Stay Isolated From Core Operations

A common reason AI pilots stall is that they remain isolated from the workflows that matter most. They operate as stand-alone experiments rather than as part of the company’s operating model.

When that happens, the pilot may save time for a small group of users, but it does not remove a real bottleneck or improve a high-value process. The result is local convenience without enterprise-level ROI.

Weak Measurement Makes It Hard to Prove ROI

Another problem is the lack of clear measurement. Many pilots begin without a baseline KPI, a defined business target, or a shared view of what success should mean.

Without that discipline, teams may talk about usage, engagement, or positive feedback, but they cannot prove business value. A pilot that cannot show measurable improvement is unlikely to earn the trust or budget needed for scale.

The Pilot Trap Turns Innovation Into Budget Waste

This is why the AI Pilot Trap matters. It is not simply a technical issue. It is a business problem. When organizations repeatedly fund pilots that never mature into operational value, they consume budget, management attention, and organizational trust.

In that sense, the trap is not caused by a lack of AI capability alone. It is caused by the gap between experimentation and execution. That is exactly why leaders need to detect the warning signs early, before another promising pilot becomes another expensive dead end.

2. The Real Causes of Low AI ROI

Low AI ROI rarely comes from a lack of technical potential alone. In most cases, the problem is more practical: the initiative is not connected to the workflows, systems, and measurement discipline required to create business value. When that happens, even a capable AI tool can produce weak returns.

Cause 1: AI Is Added to a Weak Process

One common problem is that AI is layered onto a workflow that is already inefficient. If the underlying process is unclear, fragmented, or poorly designed, AI often accelerates the wrong activity instead of improving the system itself.

In that situation, the company may see faster output without seeing better business performance. The result is automation without real operational gain.

Cause 2: The Use Case Is Interesting but Not Economically Important

Some AI pilots solve a visible problem, but not an important one. They may save time on a task that has little effect on cost, quality, throughput, customer experience, or margin.

This is a major reason why AI pilots fail to deliver meaningful returns. A use case can be technically impressive and still be too small, too isolated, or too low-value to justify sustained investment.

Cause 3: The Pilot Is Not Connected to Trusted Business Data

AI creates stronger value when it operates with access to reliable, timely business data. When a pilot is disconnected from core systems such as ERP, CRM, or operational databases, its impact is usually limited.

Without a clear source of truth, outputs may be harder to trust, decisions may require manual checking, and the workflow may remain only partially improved. That weakens both adoption and ROI.

Cause 4: Success Is Measured by Activity, Not Outcomes

Another frequent issue is poor measurement. Teams may report prompt volume, pilot usage, user excitement, or anecdotal success, but those signals do not prove business value.

Low AI ROI often reflects the absence of clear outcome metrics. If the organization cannot show improvement in a meaningful KPI, it cannot show that the pilot is creating measurable value.

Cause 5: Reliability Problems Quietly Erode ROI

Even when a pilot appears useful, inconsistent performance can reduce its value. If outputs vary too much, exceptions are not handled well, or employees must repeatedly verify results, the process becomes slower and less trusted than expected.

This is why reliability matters even in an ROI-focused discussion. An AI system does not create strong returns simply by working sometimes. It creates value when it performs consistently enough to support real operations.

3. Early Warning Signs of the AI Pilot Trap

The AI Pilot Trap rarely appears all at once. In most organizations, it develops through a series of small warning signs that are easy to ignore in the early stages. A pilot may still look promising on the surface, but if these signals begin to appear, the risk of weak AI ROI rises quickly.

Warning Sign 1: No Clear Baseline KPI Exists

A pilot cannot prove value if there is no clear measure of the current state. When teams begin without a baseline KPI, they may later claim improvement, but they have no disciplined way to show what actually changed.

This is one of the most common early mistakes. Without a baseline, ROI becomes a story rather than a measurable result.
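As a minimal sketch of what a baseline comparison looks like in practice, the Python snippet below computes the fractional change in a KPI relative to its pre-pilot value. The function name `kpi_improvement`, the `lower_is_better` flag, and the cycle-time figures are hypothetical and used only for illustration.

```python
def kpi_improvement(baseline, current, lower_is_better=True):
    """Return the fractional improvement of `current` relative to `baseline`.

    A positive result means the KPI moved in the desired direction.
    """
    if baseline <= 0:
        raise ValueError("a positive baseline measurement is required")
    change = (baseline - current) / baseline
    return change if lower_is_better else -change


# Hypothetical figures: average cycle time before the pilot and during it.
baseline_hours = 40.0
pilot_hours = 34.0
improvement = kpi_improvement(baseline_hours, pilot_hours)
print(f"Cycle time improvement vs. baseline: {improvement:.0%}")
```

Without the `baseline_hours` measurement, the same pilot result of 34 hours would have nothing to be compared against.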

Warning Sign 2: The Pilot Solves a Local Task, Not a Real Bottleneck

Some pilots improve a narrow task without changing the broader workflow. They may save a few minutes for a small group of users, but they do not remove a real source of delay, cost, or friction inside the business.

That kind of improvement may still look useful, but it rarely produces meaningful return. Enterprise value comes from solving bottlenecks, not just polishing isolated tasks.

Warning Sign 3: Human Rechecking Still Dominates the Process

A pilot may appear productive until people begin using it in real work. If employees must constantly verify outputs, correct errors, or repeat steps manually, the actual gain may be much smaller than expected.

This is one reason why AI pilots fail after early excitement. The visible speed of the tool hides the invisible cost of supervision and rework.
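The hidden cost of supervision can be made explicit with simple arithmetic. The sketch below subtracts review and rework time from the gross time a tool appears to save. The function name, its parameters, and every number in the example are hypothetical assumptions, not measurements from a real pilot.

```python
def net_minutes_saved(tasks, gross_saved_per_task, review_per_task,
                      rework_rate, rework_per_task):
    """Estimate net time saved after human review and rework are counted."""
    gross = tasks * gross_saved_per_task              # visible speed-up
    review = tasks * review_per_task                  # every output is checked
    rework = tasks * rework_rate * rework_per_task    # some outputs are redone
    return gross - review - rework


# Hypothetical week: 100 tasks, 10 minutes saved each, 4 minutes of review each,
# and 20% of outputs needing 15 minutes of manual rework.
net = net_minutes_saved(tasks=100, gross_saved_per_task=10,
                        review_per_task=4, rework_rate=0.20,
                        rework_per_task=15)
print(f"Gross saving: {100 * 10} min, net saving: {net:.0f} min")
```

Under these assumed numbers, review and rework absorb most of the apparent gain, which is the pattern this warning sign describes.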

Warning Sign 4: Data Access Is Weak or Incomplete

AI systems are far less useful when they do not have access to reliable, timely business data. If a pilot operates without a trusted source of truth, its outputs may be inconsistent, incomplete, or difficult to use in decision-making.

When data access is weak, trust drops quickly. And once trust declines, adoption and ROI usually decline with it.

Warning Sign 5: No Clear Owner Is Responsible for Business Outcomes

Many pilots are launched by enthusiastic teams, but no one is clearly responsible for the business result. If ownership of the KPI, workflow redesign, and scale path is unclear, the pilot often loses momentum after the first burst of attention.

A pilot without clear accountability may continue as an experiment, but it is unlikely to become a value engine.

Warning Sign 6: There Is No Plan to Scale Beyond the Demo

A final warning sign is the absence of a realistic path to scale. The pilot may work in a limited environment, but if there is no plan for integration, governance, training, and workflow adoption, its impact will remain small.

This is where many organizations confuse a successful test with a scalable solution. A pilot that cannot move beyond the demo stage is already showing signs of the trap.

4. The AI ROI Risk Assessment Tool

Before funding or scaling an AI pilot, leaders need a simple way to test whether it is built for measurable value or drifting toward avoidable waste.

| Assessment Area | What to Ask | Score (1-5) |
|---|---|---|
| Business Value Fit | Is the pilot tied to a meaningful business outcome? | |
| Workflow Criticality | Does it address a real bottleneck in the workflow? | |
| Data Readiness | Does it rely on reliable, timely, and trusted data? | |
| Reliability Readiness | Can it perform consistently in real operations? | |
| Governance and Ownership | Are ownership and oversight clearly defined? | |

Scoring Guide

| Total | Meaning |
|---|---|
| 21-25 | Strong ROI potential |
| 16-20 | Moderate potential |
| 10-15 | High pilot-trap risk |
| 5-9 | Likely budget waste |

This tool is not meant to produce perfect precision. Its purpose is to improve investment discipline before more time, budget, and trust are committed.
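For teams that want to apply the tool consistently across many pilots, the scoring guide can be expressed as a small lookup. The following Python sketch uses the five assessment areas and the four bands from the tables above. The function name `classify_pilot` and the example scores are hypothetical.

```python
AREAS = (
    "Business Value Fit",
    "Workflow Criticality",
    "Data Readiness",
    "Reliability Readiness",
    "Governance and Ownership",
)

# (lowest total, highest total, meaning), copied from the scoring guide.
BANDS = (
    (21, 25, "Strong ROI potential"),
    (16, 20, "Moderate potential"),
    (10, 15, "High pilot-trap risk"),
    (5, 9, "Likely budget waste"),
)


def classify_pilot(scores):
    """Sum the five 1-5 scores and return (total, band meaning)."""
    for area in AREAS:
        if area not in scores:
            raise ValueError(f"missing score for {area!r}")
        if not 1 <= scores[area] <= 5:
            raise ValueError(f"score for {area!r} must be between 1 and 5")
    total = sum(scores[area] for area in AREAS)
    for low, high, meaning in BANDS:
        if low <= total <= high:
            return total, meaning
    raise AssertionError("totals between 5 and 25 always match a band")


# Hypothetical pilot that scores 3 in every area.
example = {area: 3 for area in AREAS}
print(classify_pilot(example))  # prints (15, 'High pilot-trap risk')
```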

5. A Simple Example: Two AI Pilots, Two Very Different AI ROI Profiles

The value of the AI ROI Risk Assessment Tool becomes clearer when it is applied to real decisions. Two pilots may both look promising in a demo, yet have very different potential to create measurable business value. To make that difference more rigorous, this section uses a weighted ROI readiness calculation rather than a simple raw total.

How the Weighted Calculation Works

Not all evaluation factors matter equally. In this framework, dimensions that are more important to AI ROI receive greater weight.

The weighted formula is:

Weighted ROI Readiness Score = Σ (Score × Weight), summed across the five assessment areas

The weights used here are:

- Business Value Fit = 0.30

- Workflow Criticality = 0.25

- Data Readiness = 0.20

- Reliability Readiness = 0.15

- Governance and Ownership = 0.10

These weights add up to 1.00, which means the final weighted score remains on a 1 to 5 scale.
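The weighted formula can be sketched in Python as follows. This is one possible implementation rather than a prescribed one: the weights come from the list above, while the function name `weighted_readiness` and the choice to store weights as whole percentages (to keep the arithmetic exact) are assumptions made for illustration.

```python
# Weights from the list above, expressed as whole percentages.
WEIGHTS_PCT = {
    "Business Value Fit": 30,
    "Workflow Criticality": 25,
    "Data Readiness": 20,
    "Reliability Readiness": 15,
    "Governance and Ownership": 10,
}
assert sum(WEIGHTS_PCT.values()) == 100  # the weights add up to 1.00


def weighted_readiness(scores):
    """Return the sum of score x weight on the same 1-5 scale as the inputs."""
    if set(scores) != set(WEIGHTS_PCT):
        raise ValueError("scores must cover exactly the five assessment areas")
    if any(not 1 <= s <= 5 for s in scores.values()):
        raise ValueError("each score must be between 1 and 5")
    hundredths = sum(scores[area] * pct for area, pct in WEIGHTS_PCT.items())
    return hundredths / 100


# Because the weights sum to 1.00, the extremes stay at 1 and 5.
lowest = weighted_readiness({area: 1 for area in WEIGHTS_PCT})
highest = weighted_readiness({area: 5 for area in WEIGHTS_PCT})
print(lowest, highest)  # prints 1.0 5.0
```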

Pilot A: Impressive but Weak in AI ROI

The first pilot is a standalone meeting summarization assistant. It produces polished notes, saves some employee time, and receives positive feedback from users. On the surface, it looks successful.

But its ROI logic is weak. It is not tied to a major workflow bottleneck, it has no strong link to a business KPI, and it does not connect directly to core enterprise systems.

Scores

- Business Value Fit: 2

- Workflow Criticality: 2

- Data Readiness: 2

- Reliability Readiness: 3

- Governance and Ownership: 2

Weighted calculation

- 2 × 0.30 = 0.60

- 2 × 0.25 = 0.50

- 2 × 0.20 = 0.40

- 3 × 0.15 = 0.45

- 2 × 0.10 = 0.20

Weighted ROI Readiness Score = 0.60 + 0.50 + 0.40 + 0.45 + 0.20 = 2.15, or 2.2 when rounded.

This places Pilot A in a weak ROI position. Its raw total of 11 out of 25 also falls in the “High pilot-trap risk” band of the scoring guide. It may be useful as a convenience tool, but its weighted profile shows that it lacks the business and workflow foundation needed to produce meaningful impact.

Pilot B: Narrower Scope, Stronger AI ROI Logic

The second pilot supports exception triage in a workflow where delays, rework, and missed handoffs already create measurable cost. It is tied to a specific process, connected to operational data, and evaluated against a clear KPI such as cycle time or error reduction.

This pilot may look less flashy than the first one, but its ROI foundation is much stronger.

Scores

- Business Value Fit: 5

- Workflow Criticality: 5

- Data Readiness: 4

- Reliability Readiness: 4

- Governance and Ownership: 4

Weighted calculation

- 5 × 0.30 = 1.50

- 5 × 0.25 = 1.25

- 4 × 0.20 = 0.80

- 4 × 0.15 = 0.60

- 4 × 0.10 = 0.40

Weighted ROI Readiness Score = 1.50 + 1.25 + 0.80 + 0.60 + 0.40 = 4.55, or 4.6 when rounded.

This places Pilot B in a strong ROI position. Its raw total of 22 out of 25 also falls in the “Strong ROI potential” band of the scoring guide. It addresses a real bottleneck, performs well on the most important dimensions, and has a much clearer path to measurable business value.

Why the Weighted Method Matters

This comparison shows why AI ROI should not be judged by technical performance alone. A simple raw total is useful, but a weighted method is more realistic because it gives greater influence to the factors that matter most, especially business value fit and workflow criticality.

That is why Pilot A and Pilot B are not just different in degree. They are different in logic. One is an interesting tool. The other is a value-ready initiative. In practice, that is what separates experimentation from measurable business impact.
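To see how the weighting changes the picture, the short Python sketch below recomputes both pilots from the scores and weights given above and sets the unweighted mean beside the weighted score. The variable and function names are illustrative assumptions. Weights are stored as whole percentages so the sums stay exact.

```python
# Order: value fit, workflow criticality, data, reliability, governance.
WEIGHTS_PCT = (30, 25, 20, 15, 10)
PILOT_A = (2, 2, 2, 3, 2)
PILOT_B = (5, 5, 4, 4, 4)


def raw_total(scores):
    """Simple unweighted total on the 5-25 scale."""
    return sum(scores)


def weighted_hundredths(scores):
    """Weighted score in hundredths, e.g. 215 means 2.15."""
    return sum(s * w for s, w in zip(scores, WEIGHTS_PCT))


for name, scores in (("Pilot A", PILOT_A), ("Pilot B", PILOT_B)):
    total = raw_total(scores)
    weighted = weighted_hundredths(scores) / 100
    print(f"{name}: raw total {total}/25, "
          f"unweighted mean {total / 5:.2f}, weighted {weighted:.2f}")
```

With these inputs the unweighted means are 2.20 and 4.40, while the weighted scores are 2.15 and 4.55. The gap widens from 2.20 to 2.40 points because Pilot B's highest scores sit on the two most heavily weighted dimensions, whereas Pilot A's only score above 2 sits on a lower-weighted one.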

6. Why Reliability Still Matters Even in an ROI-Focused Article

Even in an ROI-focused discussion, reliability matters because weak execution destroys value. A pilot may look strong in a demo, but if it performs inconsistently in live operations, its economic case quickly weakens. For operations and engineering leaders, this is not a side issue. It is a practical constraint on whether AI can be trusted inside real workflows.

Reliability Protects ROI

Reliable performance reduces rework, delay, and supervision. The more consistently a pilot performs under real operating conditions, the more likely it is to deliver measurable business value.

Weak Reliability Creates Operational Friction

An AI pilot can appear efficient while quietly adding burden to the system. If employees must repeatedly verify outputs, correct failures, or handle exceptions manually, the business absorbs hidden cost that erodes ROI.

Trust Depends on Consistency

In production environments, trust is earned through repeatable performance. If users cannot depend on the system, they will limit its use, add manual checkpoints, or bypass it altogether. Once that happens, scale and ROI both become difficult.

Reliability Is an Economic Condition

This article is centered on AI ROI, not reliability engineering. Even so, the point is simple: a pilot that cannot perform consistently in real workflows is unlikely to produce strong returns. Reliability is not the headline, but it is one of the conditions that makes ROI possible.

7. What Leaders Should Do Before Funding the Next AI Pilot

Before approving another AI pilot, leaders should apply more discipline at the front end. The goal is not to slow innovation. It is to make sure the initiative has a credible path to measurable business value.

Start With the Business Problem

The first question is not which tool to use. It is which business problem needs to be improved. A pilot should be tied to a real bottleneck, not just a visible task.

Define the KPI Before Launch

Leaders should identify the target metric before the pilot begins. If cost, cycle time, error rate, service level, or throughput is not clearly defined in advance, ROI will be difficult to prove later.

Check Data and Workflow Readiness

A pilot should be supported by trusted data and placed inside a workflow that matters. If the data is weak or the workflow is peripheral, the pilot may generate interest without creating much value.

Use the ROI Tool Before Expanding Spend

Before scaling a pilot, leaders should use the AI ROI Risk Assessment Tool to test its readiness. That creates a more disciplined basis for deciding whether the initiative should move forward, be redesigned, or be stopped.

Treat Pilots as Business Decisions, Not Technology Experiments

The strongest organizations do not fund AI pilots simply because the technology is promising. They fund them because the value logic is clear. That is the mindset required to avoid the AI Pilot Trap and build AI initiatives that can survive operational reality.

8. Conclusion

The AI ROI reckoning reflects a simple reality: enterprise AI is no longer judged by experimentation alone. It is judged by whether it improves cost, speed, quality, throughput, or margin in a measurable way. Many pilots appear promising in the early stages, but far fewer create lasting business value.

That is why leaders need to look beyond technical capability and ask harder questions. Does the pilot address a real workflow bottleneck? Is it tied to a clear KPI? Does it rely on trusted data? Can it perform reliably enough to support real operations? Without those conditions, even an impressive pilot can become an expensive distraction.

The central lesson is clear. AI pilots should not be funded because they are interesting. They should be funded because they have a credible path to measurable business value. The organizations that apply that discipline early will be far better positioned to avoid the AI Pilot Trap and build AI initiatives that can scale.

The next step is not simply to run more pilots, but to design the operating architecture that allows good pilots to become repeatable value engines.