Enterprise AI ROI Readiness Assessment

A step-by-step exercise for assessing enterprise AI ROI before scaling an AI pilot.

Is your AI pilot ready to deliver enterprise AI ROI?

Many AI pilots look promising at first, but far fewer deliver measurable enterprise AI ROI at scale. The problem is often not technical capability alone, but the lack of a disciplined way to judge whether a pilot is truly ready to improve a real workflow and justify further investment.

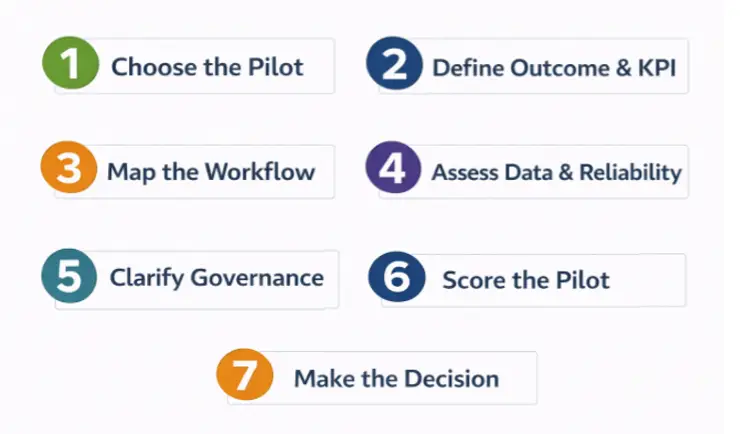

This practical exercise uses seven steps to assess enterprise AI ROI before scale: choose the pilot, define the outcome and KPI, map the workflow, assess data and reliability, clarify governance, score the pilot, and make the final decision. The goal is to determine whether the pilot should be scaled, redesigned, or stopped before more budget is committed.

Step 1. Choose One AI Pilot to Evaluate

The first step in assessing enterprise AI ROI is to choose one real AI pilot already being tested, proposed, or discussed in the organization. Focus on a specific use case tied to a real workflow, not a broad ambition or a demo-only idea.

A strong candidate usually addresses delay, rework, manual effort, or inconsistent decisions. Avoid pilots that are too vague, too experimental, or too small to justify serious evaluation. If the pilot is not connected to a meaningful business process, it will be difficult to generate measurable business value.

Use this sentence:

This pilot uses AI to __________ in order to improve __________.

Exercise

Fill in:

- Pilot name: ____________________

- What the AI does: ____________________

- Affected workflow: ____________________

Checkpoint

- Is this pilot tied to a real workflow?

- Is the workflow important enough to support enterprise AI ROI?

Output

Pilot Definition Sheet

Step 2. Define the Business Outcome and KPI

A strong enterprise AI ROI assessment begins with a clear business outcome and a measurable KPI. Define the result the pilot is expected to improve, then choose the metric that will show whether that improvement actually happens.

Focus on one business outcome that matters to the enterprise, such as lower cost, shorter cycle time, fewer errors, higher throughput, or better service. Then select one primary KPI that can be measured before and after the pilot. Avoid vague goals such as "improve efficiency" and weak metrics such as prompt volume or user curiosity, which track activity rather than business performance.

Use this sentence:

The business outcome of this pilot is to improve __________, and the primary KPI is __________.

Exercise

Fill in:

- Target business outcome: ____________________

- Primary KPI: ____________________

- Current baseline: ____________________

- Target improvement: ____________________

Checkpoint

- Is the outcome stated in business terms, not technical terms?

- Does the KPI measure real business performance?

- Can it be measured before and after the pilot?

Output

Business Outcome Statement and KPI Definition Sheet
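
The baseline and target improvement recorded in this step can be checked with simple arithmetic once the pilot has produced a measured KPI value. The sketch below is one illustrative way to do that, not a prescribed method: the function names, the `lower_is_better` flag, and the invoice cycle-time figures are all assumptions made for the example.

```python
def kpi_improvement(baseline: float, measured: float, lower_is_better: bool = True) -> float:
    """Return the relative improvement over the baseline as a fraction.

    A positive result means the KPI moved in the desired direction.
    """
    if baseline == 0:
        raise ValueError("baseline must be non-zero to compute a relative change")
    change = (baseline - measured) / baseline
    # For KPIs such as throughput, a higher measured value is the improvement.
    return change if lower_is_better else -change


def target_met(baseline: float, measured: float, target_improvement: float,
               lower_is_better: bool = True) -> bool:
    """Check whether the measured improvement reaches the stated target."""
    return kpi_improvement(baseline, measured, lower_is_better) >= target_improvement


# Hypothetical figures: cycle time falls from 10 days to 8 days,
# against a target improvement of 15 percent.
improvement = kpi_improvement(baseline=10.0, measured=8.0)
print(f"Improvement: {improvement:.0%}")
print("Target met:", target_met(10.0, 8.0, target_improvement=0.15))
```

A check like this only works if the baseline was captured before the pilot started, which is why the exercise asks for it explicitly.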

Step 3. Map the Workflow

To evaluate enterprise AI ROI, map the workflow the pilot is meant to improve. Identify where the pilot enters the process, where the bottleneck exists, and where delay, rework, or manual effort currently occur.

Do not assess the AI tool by itself. Assess the process around it. A pilot may look useful in isolation, but if it does not improve a real bottleneck in the workflow, its impact on enterprise AI ROI will remain limited.

Use this sentence:

This pilot enters the workflow at __________ and is expected to improve __________.

Exercise

Fill in:

- Workflow name: ____________________

- Where the pilot enters the workflow: ____________________

- Main bottleneck or delay point: ____________________

- Where rework or manual effort occurs: ____________________

Checkpoint

- Does the pilot improve a real bottleneck?

- Can you identify where delay, handoffs, or rework occur?

Output

Simple Workflow Map

Step 4. Assess Data and Reliability Readiness

Strong enterprise AI ROI depends on both trusted data and reliable execution. Identify the data the pilot needs, where it comes from, and whether it is timely, accurate, and connected to a clear source of truth. Then assess whether the pilot can perform consistently in real operations without creating hidden rework or excessive human checking.

A pilot may look strong in a demo, but weak data or inconsistent performance can quickly reduce business value. That is why data readiness and reliability readiness must be assessed together.

Use this sentence:

This pilot depends on __________ data from __________ and is reliable enough if it can consistently __________.

Exercise

Fill in:

- Main data source: ____________________

- Is the data timely and trusted? ____________________

- Where inconsistency is most likely to appear? ____________________

- What human checking may still be needed? ____________________

Checkpoint

- Does the pilot rely on a trusted source of truth?

- Can it perform consistently in real workflows?

- Will it reduce work, or add hidden supervision and rework?

Output

Data and Reliability Readiness Notes
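
The last checkpoint question, whether the pilot reduces work or adds hidden supervision and rework, can be made concrete with a rough per-case estimate. The following is a minimal sketch under stated assumptions: the cost model (review and rework as expected minutes per case), the function name, and every number in the example are hypothetical and should be replaced with figures observed in the real workflow.

```python
def net_minutes_saved_per_case(
    manual_minutes: float,
    ai_minutes: float,
    review_rate: float,
    review_minutes: float,
    rework_rate: float,
    rework_minutes: float,
) -> float:
    """Estimate minutes saved per case after hidden checking and rework.

    review_rate and rework_rate are fractions of cases (0.0 to 1.0).
    A negative result means the pilot adds work instead of removing it.
    """
    for name, rate in (("review_rate", review_rate), ("rework_rate", rework_rate)):
        if not 0.0 <= rate <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    # Expected hidden effort per case: human checks plus fixing AI errors.
    hidden_cost = review_rate * review_minutes + rework_rate * rework_minutes
    return manual_minutes - (ai_minutes + hidden_cost)


# Hypothetical case: a 30-minute manual task drops to 10 minutes with AI,
# but half of all cases get an 8-minute review and 10% need 30 minutes of rework.
saved = net_minutes_saved_per_case(30, 10, 0.5, 8, 0.1, 30)
print(f"Net minutes saved per case: {saved:.1f}")
```

If the estimate is close to zero or negative, the pilot may still look strong in a demo while delivering little value in operation.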

Step 5. Assess Governance and Ownership

Clear governance is essential to strong enterprise AI ROI. Identify who owns the KPI, who oversees the pilot, and who will decide whether it should scale, be redesigned, or stop.

If ownership is unclear, the pilot may continue as a technical experiment without creating accountable business value. Enterprise AI ROI is much harder to achieve when no one owns the outcome.

Use this sentence:

This pilot is owned by __________, and the decision to scale or stop will be made by __________.

Exercise

Fill in:

- KPI owner: ____________________

- Pilot oversight owner: ____________________

- Who decides scale, redesign, or stop: ____________________

Checkpoint

- Is ownership clear?

- Is this being managed as a business initiative, not just a tech test?

Output

Governance and Ownership Sheet

Step 6. Score the Pilot

To judge enterprise AI ROI more rigorously, score the pilot across five dimensions: Business Value Fit, Workflow Criticality, Data Readiness, Reliability Readiness, and Governance and Ownership. Use a 1 to 5 scale, where 1 means weak readiness and 5 means strong readiness.

A simple total is useful, but a weighted score is often better because it gives more influence to the factors that matter most for enterprise AI ROI, especially business value fit and workflow criticality.

Use this sentence:

The overall readiness score for this pilot is __________ based on __________.

Exercise

Fill in:

- Business Value Fit: ____________________

- Workflow Criticality: ____________________

- Data Readiness: ____________________

- Reliability Readiness: ____________________

- Governance and Ownership: ____________________

Checkpoint

- Are the scores based on business value readiness, not demo quality?

- Do the highest weights reflect the factors that matter most for enterprise AI ROI?

Output

AI ROI Readiness Scorecard
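
The difference between a simple total and a weighted score can be sketched in a few lines. The weights below are assumptions chosen only to illustrate the idea that business value fit and workflow criticality carry more influence; the exercise itself does not prescribe specific values, and the example scores are hypothetical.

```python
# Illustrative weights (an assumption, not prescribed by the exercise).
# Business value fit and workflow criticality carry the most influence.
WEIGHTS = {
    "Business Value Fit": 0.30,
    "Workflow Criticality": 0.25,
    "Data Readiness": 0.15,
    "Reliability Readiness": 0.15,
    "Governance and Ownership": 0.15,
}


def weighted_readiness(scores: dict, weights: dict = WEIGHTS) -> float:
    """Return the weighted average of 1-to-5 scores, itself on a 1-to-5 scale."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same dimensions")
    for name, value in scores.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be scored from 1 to 5")
    total_weight = sum(weights.values())
    # Dividing by the total weight keeps the result on the 1-to-5 scale
    # even if the weights do not sum exactly to 1.
    return sum(scores[name] * weights[name] for name in weights) / total_weight


# Hypothetical pilot: strong business case, weak governance.
example_scores = {
    "Business Value Fit": 4,
    "Workflow Criticality": 5,
    "Data Readiness": 3,
    "Reliability Readiness": 3,
    "Governance and Ownership": 2,
}
print("Simple total:", sum(example_scores.values()), "out of 25")
print(f"Weighted score: {weighted_readiness(example_scores):.2f} out of 5")
```

Whatever weights are chosen, they should be agreed before scoring starts so that they are not adjusted to fit a preferred result.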

Step 7. Apply the Exercise and Make the Decision

Now apply the full exercise to one real pilot and turn the assessment into a decision. The purpose is not just to produce a score, but to judge whether the pilot is truly ready to deliver enterprise AI ROI.

Use the information from Steps 1 through 6 to classify the pilot into one of four outcomes:

- Ready to Scale

- Needs Redesign

- High Pilot-Trap Risk

- Not Ready for Further Investment

A strong pilot is tied to a real workflow, measured by a meaningful KPI, supported by trusted data, reliable in operation, and owned by the right people. If those conditions are weak, the pilot may still look promising, but it is not ready to create measurable business value.

Use this sentence:

Based on this assessment, the pilot is classified as __________ because __________.

Exercise

Fill in:

- Final classification: ____________________

- Main reason for the decision: ____________________

- Recommended next action: ____________________

Checkpoint

- Does the evidence support the decision?

- Would leadership understand why this pilot should scale, be redesigned, or stop?

Output

Pilot Decision Summary
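
The four outcomes can also be written down as an explicit rule so that the classification is applied the same way to every pilot. The sketch below is only one possible rule. The exercise does not define numeric cutoffs, so the thresholds (4.0, 2.5, and the per-dimension limits) and the reading of "High Pilot-Trap Risk" as weak value logic or ownership are assumptions made for illustration.

```python
def classify_pilot(scores: dict, weighted_score: float) -> str:
    """Map 1-to-5 dimension scores and an overall score to one of four outcomes.

    All thresholds here are hypothetical and should be set by the
    people who own the scale, redesign, or stop decision.
    """
    weakest = min(scores.values())

    # Strong overall and no dimension below the midpoint.
    if weighted_score >= 4.0 and weakest >= 3:
        return "Ready to Scale"

    # Weak overall readiness.
    if weighted_score < 2.5:
        return "Not Ready for Further Investment"

    # Mid-range pilots: separate weak value logic or ownership
    # (assumed here to signal pilot-trap risk) from fixable readiness gaps.
    value_logic = min(
        scores["Business Value Fit"],
        scores["Workflow Criticality"],
        scores["Governance and Ownership"],
    )
    if value_logic <= 2:
        return "High Pilot-Trap Risk"
    return "Needs Redesign"


# Hypothetical pilot with a solid business case but unclear ownership.
example_scores = {
    "Business Value Fit": 4,
    "Workflow Criticality": 5,
    "Data Readiness": 3,
    "Reliability Readiness": 3,
    "Governance and Ownership": 2,
}
print(classify_pilot(example_scores, weighted_score=3.65))
```

A rule like this supports the decision but does not replace it: the "because" part of the decision sentence still has to come from the evidence gathered in Steps 1 through 6.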

Common Mistakes That Weaken Enterprise AI ROI

Even a well-designed assessment can be weakened by a few common mistakes. Before finalizing the result, check whether the pilot suffers from any of the following problems: no meaningful KPI, weak workflow fit, poor data quality, low reliability, unclear ownership, or premature scaling.

These mistakes often make a pilot look more ready than it really is. In practice, weak enterprise AI ROI usually comes from poor workflow logic and weak operational readiness, not from a lack of technical promise alone.

Use this sentence:

The main risk that could weaken enterprise AI ROI in this pilot is __________.

Exercise

Fill in:

- Most likely mistake or risk: ____________________

- Why it matters: ____________________

- What should be corrected first: ____________________

Checkpoint

- Are you confusing activity with value?

- Are you scaling before the pilot is operationally ready?

Output

Risk and Correction Notes
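
If the pilot has already been scored on a 1 to 5 scale across the five readiness dimensions, the lowest scores give a quick first guess at which mistake to examine. The mapping from dimensions to mistakes below is an assumption made for this sketch, as is the threshold of 3. Premature scaling is left out because it concerns the timing of the decision rather than any single score.

```python
# Assumed pairing of each scored dimension with the mistake it most
# plausibly signals when the score is low (illustrative only).
MISTAKE_BY_DIMENSION = {
    "Business Value Fit": "no meaningful KPI",
    "Workflow Criticality": "weak workflow fit",
    "Data Readiness": "poor data quality",
    "Reliability Readiness": "low reliability",
    "Governance and Ownership": "unclear ownership",
}


def likely_mistakes(scores: dict, threshold: int = 3) -> list:
    """List the mistakes suggested by scores below the threshold, worst first."""
    flagged = [(value, name) for name, value in scores.items() if value < threshold]
    # Sort by score only; the stable sort keeps the original order on ties.
    flagged.sort(key=lambda item: item[0])
    return [MISTAKE_BY_DIMENSION[name] for _, name in flagged]


# Hypothetical scores for a pilot with weak reliability and ownership.
example_scores = {
    "Business Value Fit": 4,
    "Workflow Criticality": 5,
    "Data Readiness": 3,
    "Reliability Readiness": 2,
    "Governance and Ownership": 1,
}
for mistake in likely_mistakes(example_scores):
    print("Check for:", mistake)
```

The first item in the list is a reasonable candidate for "what should be corrected first", subject to the judgment of the KPI owner.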

Final Outputs of This Exercise

By the end of this exercise, you should have:

- Pilot Definition Sheet

- Business Outcome Statement and KPI Definition Sheet

- Simple Workflow Map

- Data and Reliability Readiness Notes

- Governance and Ownership Sheet

- AI ROI Readiness Scorecard

- Pilot Decision Summary

- Risk and Correction Notes

Conclusion: Enterprise AI ROI Starts Before Scale

Many AI pilots fail not because the technology is weak, but because the value logic is weak. This exercise provides a practical way to assess enterprise AI ROI before more budget is committed.

From an industrial engineering perspective, the goal is not simply to test AI capability. It is to improve system performance through optimization, reliability, and systems thinking. A pilot should move forward only when the workflow matters, the KPI is clear, the data is trusted, the performance is reliable, and ownership is defined. That is the discipline that separates interesting experiments from value-ready enterprise AI initiatives.