A Deep Dive into Alibaba’s Qwen3 Stack: Architecture, Performance, and Implications

Beyond GPT-4? Alibaba’s Qwen3 Stack Aims for the Summit

A detailed look at Alibaba’s Qwen3 architecture, from trillion-parameter design to real-world benchmarks and deployment.

In the global race to build frontier AI, Alibaba just changed the pace.

While Western labs like OpenAI, Google DeepMind, and Anthropic follow carefully timed release cycles, Alibaba has taken a different approach. It dropped a full suite of advanced models all at once under its newly upgraded Qwen3 banner. This is more than an incremental update. It is a full-spectrum release, including a trillion-parameter large language model (Qwen3-Max), fully multimodal systems (Qwen3-Omni), real-time translators, safety tools, and advanced vision models.

With Qwen3, Alibaba is not simply trying to catch up. It is attempting to redefine what cutting-edge AI looks like, particularly in the areas of multilingual, multimodal, and specialized systems. The scale and variety of the release reflect a bold strategy focused on speed, specialization, and building a complete ecosystem.

This post takes a detailed look at the full Qwen3 stack. It explores the architecture, performance benchmarks, and how this launch fits into the broader AI landscape. Whether Qwen3 proves to be a true frontier model or a strong regional alternative, one thing is clear: Alibaba is no longer following. It is stepping up to lead.

1. Overview of the Qwen3 Model Family

Alibaba didn’t just release a single model. It launched a full suite under the Qwen3 name, each version built for a specific purpose. Here’s a breakdown of the main models.

Qwen3-Max is the flagship with over 1 trillion parameters. It is built for complex tasks like coding, multi-step reasoning, and agent-style execution. A variant called Qwen3-Max-Heavy reportedly scores perfectly on math reasoning benchmarks.

Qwen3-Omni supports full multimodal input across text, images, audio, and video. It understands speech in 19 languages and generates speech in 10. Its “thinking” versus “non-thinking” toggle lets applications trade depth of reasoning against response speed.

Qwen3-VL is designed for vision-language tasks such as image captioning and visual question answering. It is open source and performs competitively against some leading closed models in benchmark tests.

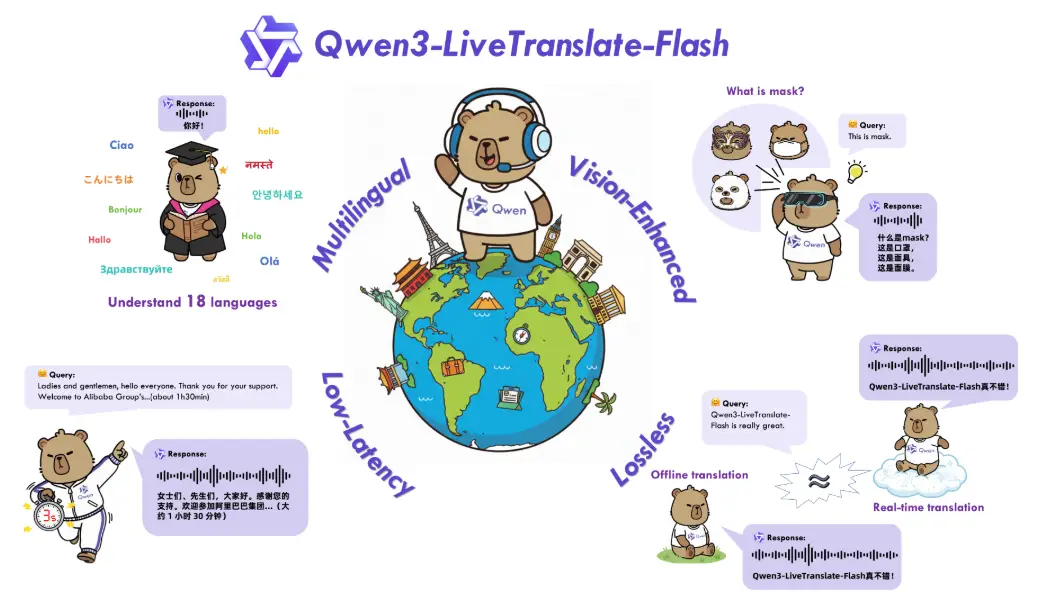

Qwen3-Coder handles code generation across multiple programming languages. Qwen3-Guard focuses on content moderation and safety enforcement. LiveTranslate-Flash delivers real-time speech-to-speech translation with strong accuracy and low latency.

Together, these models form a modular, end-to-end AI stack. Instead of relying on a single general-purpose model, Alibaba is investing in a specialized model ecosystem. The goal is not only to scale performance but to achieve broad coverage across key AI capabilities, including language, vision, speech, safety, and real-world deployment.

2. Qwen3 Architecture and Model Design

Qwen3 is not just a scaled-up language model. It’s a carefully designed stack of specialized systems, each engineered for efficiency, flexibility, and performance. While Alibaba hasn’t disclosed every detail, early performance signals and product documentation reveal key architectural principles that separate Qwen3 from earlier iterations and many Western counterparts.

Mixture of Experts at Scale

At the core of Qwen3-Max is a transformer architecture likely enhanced with a Mixture of Experts (MoE) framework. Instead of activating the entire network for each input, MoE uses routing layers to select a small set of expert subnetworks for each token. Because only the selected experts run, the compute cost per token tracks the active parameters rather than the full trillion, so inference is significantly cheaper than in a dense model of the same size. The trade-off is that all experts still have to be held in memory, so serving the model remains demanding.
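To make the routing idea concrete, here is a minimal sketch of top-k expert selection in plain Python. It is not Alibaba's implementation, which has not been published in detail. The expert count, the value of k, and the toy experts are all assumptions chosen for illustration, and the router scores are supplied directly instead of being computed by a learned layer.

```python
import math
import random

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k):
    """Pick the k highest-scoring experts and renormalize their gate weights."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in chosen)
    return [(i, probs[i] / mass) for i in chosen]

def moe_layer(token, experts, router_logits, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    output = [0.0] * len(token)
    for index, weight in route_top_k(router_logits, k):
        expert_out = experts[index](token)
        output = [o + weight * e for o, e in zip(output, expert_out)]
    return output

# Toy setup (assumption): 8 experts, each of which just scales its input.
NUM_EXPERTS = 8
experts = [lambda x, s=scale: [s * v for v in x] for scale in range(1, NUM_EXPERTS + 1)]

rng = random.Random(0)
token = [0.5, -1.0, 2.0]
# In a real model these scores come from a learned router applied to the token.
router_logits = [rng.uniform(-1, 1) for _ in range(NUM_EXPERTS)]

selected = route_top_k(router_logits, k=2)
mixed = moe_layer(token, experts, router_logits, k=2)
active_fraction = len(selected) / NUM_EXPERTS  # share of experts that actually ran
```

With 2 of 8 experts active, only a quarter of the expert weights take part in the forward pass for this token, which is the source of the compute savings described above.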

Multimodal Input Routing

Qwen3-Omni handles multiple input types through a unified interface. Internally, the model uses modality-specific pathways to process text, image, audio, and video data. This routing system enables seamless cross-modal reasoning, allowing one model to move fluidly between media types without requiring separate processing pipelines.
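One way to picture modality-specific pathways feeding a single model is a set of per-modality encoders that all emit vectors into one shared sequence. The sketch below is only an illustration of that pattern. The encoder functions, the two-dimensional vectors, and the patch and frame sizes are stand-ins invented here, not details of Qwen3-Omni.

```python
from typing import Any, Callable, Dict, List, Tuple

Embedding = List[float]

def encode_text(payload: str) -> List[Embedding]:
    # Stand-in encoder: one vector per whitespace-separated word.
    return [[float(len(word)), 0.0] for word in payload.split()]

def encode_image(payload: bytes) -> List[Embedding]:
    # Stand-in encoder: one vector per 4-byte "patch".
    return [[float(sum(payload[i:i + 4])), 1.0] for i in range(0, len(payload), 4)]

def encode_audio(payload: List[float]) -> List[Embedding]:
    # Stand-in encoder: one vector per 2-sample frame.
    return [[sum(abs(s) for s in payload[i:i + 2]), 2.0] for i in range(0, len(payload), 2)]

# Each modality has its own pathway; all pathways emit the same vector shape.
ENCODERS: Dict[str, Callable[[Any], List[Embedding]]] = {
    "text": encode_text,
    "image": encode_image,
    "audio": encode_audio,
}

def fuse(inputs: List[Tuple[str, Any]]) -> List[Embedding]:
    """Route each (modality, payload) pair to its encoder and concatenate in order."""
    sequence: List[Embedding] = []
    for modality, payload in inputs:
        if modality not in ENCODERS:
            raise ValueError(f"unsupported modality: {modality}")
        sequence.extend(ENCODERS[modality](payload))
    return sequence

# A mixed prompt: a text instruction followed by an 8-byte placeholder "image".
shared_sequence = fuse([("text", "describe this"), ("image", bytes(8))])
```

Because every encoder returns vectors of the same shape, the downstream model can attend over text, image, and audio positions in one pass instead of handing results between separate pipelines.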

Long-Context Window Support

Select Qwen3 models support context windows of over 100,000 tokens. This expanded capacity allows the system to work with long-form documents, multi-step reasoning, large codebases, and detailed prompt chaining. The ability to retain and reason over extended inputs can improve reliability in areas like legal analysis, software development, and agentic planning.
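Even a large window is a budget that has to be managed. The following sketch shows one simple way to decide which documents fit into a single prompt. The 100,000-token window comes from the figure above, while the four-characters-per-token estimate and the output reserve are rough assumptions. A real deployment would count tokens with the model's own tokenizer.

```python
def estimate_tokens(text, chars_per_token=4.0):
    """Very rough token estimate; real tokenizers vary by language and content."""
    return max(1, round(len(text) / chars_per_token)) if text else 0

def pack_documents(documents, context_window=100_000, reserve_for_output=4_000):
    """Greedily keep documents in order until the input budget is used up."""
    budget = context_window - reserve_for_output
    kept, used = [], 0
    for name, text in documents:
        cost = estimate_tokens(text)
        if used + cost > budget:
            break  # stop at the first document that would overflow the window
        kept.append(name)
        used += cost
    return kept, used

# Hypothetical inputs: three files of 200k, 150k, and 100k characters.
documents = [
    ("contract.txt", "x" * 200_000),
    ("appendix.txt", "x" * 150_000),
    ("emails.txt", "x" * 100_000),
]
kept, used = pack_documents(documents)
```

Here the first two files fit and the third is left out, so the caller would need to summarize, truncate, or send it in a follow-up request.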

Thinking vs Non-Thinking Modes

A standout feature in Qwen3-Omni is its dual-mode inference, with one mode optimized for deep reasoning and the other for fast response. The “thinking” mode prioritizes multi-step logic and contextual depth. The “non-thinking” mode favors speed and low-latency execution. This gives developers more control over how the model behaves depending on application needs.
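In application code, that control usually comes down to a per-request decision. The sketch below shows one possible policy: turn on deep reasoning only when the task needs it and the latency budget allows it. The `enable_thinking` field name and the 2,000 ms threshold are assumptions for illustration. The actual switch should be taken from the provider's documentation.

```python
def choose_thinking(needs_multistep_reasoning, latency_budget_ms, min_budget_ms=2000):
    """Enable deep reasoning only when the task needs it and the budget allows it."""
    return needs_multistep_reasoning and latency_budget_ms >= min_budget_ms

def generation_options(needs_multistep_reasoning, latency_budget_ms):
    """Build per-request options for a hypothetical chat endpoint."""
    # "enable_thinking" is a hypothetical field name used for illustration.
    return {
        "enable_thinking": choose_thinking(needs_multistep_reasoning, latency_budget_ms)
    }

# Three hypothetical workloads with different needs.
autocomplete = generation_options(needs_multistep_reasoning=False, latency_budget_ms=300)
contract_review = generation_options(needs_multistep_reasoning=True, latency_budget_ms=15_000)
voice_reply = generation_options(needs_multistep_reasoning=True, latency_budget_ms=500)
```

A batch contract review gets the slower, deeper mode, while autocomplete and a live voice reply stay in the fast mode even if the voice request would benefit from more reasoning.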

Design Philosophy

The architectural vision behind Qwen3 focuses on scalability, modularity, and specialization. Rather than releasing one all-purpose model, Alibaba has created a framework where models can be optimized for specific tasks but still interact as part of a larger system. This strategy makes Qwen3 more adaptable and better suited for real-world deployment.
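As a rough picture of what specialized models working as one system could look like from the caller's side, here is a small routing-table sketch. The model names come from the lineup described earlier. The task labels, the fallback choice, and the idea of placing the safety model first are assumptions made for this example, not a documented Alibaba workflow.

```python
# Hypothetical task labels mapped to the specialized models named in this post.
ROUTES = {
    "code": "Qwen3-Coder",
    "vision": "Qwen3-VL",
    "speech_translation": "LiveTranslate-Flash",
    "multimodal_chat": "Qwen3-Omni",
}
DEFAULT_MODEL = "Qwen3-Max"   # assumed general-purpose fallback
SAFETY_MODEL = "Qwen3-Guard"  # assumed to screen requests before the main model

def plan_pipeline(task_type, moderate=True):
    """Return the ordered list of models a request would pass through."""
    steps = [SAFETY_MODEL] if moderate else []
    steps.append(ROUTES.get(task_type, DEFAULT_MODEL))
    return steps

code_plan = plan_pipeline("code")
fallback_plan = plan_pipeline("legal_analysis", moderate=False)
```

The table keeps the routing decision in one place, so adding or swapping a specialized model does not require changes to the calling code.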

3. Multimodality in Qwen3

One of the standout capabilities of the Qwen3 stack is its ability to process and understand inputs across multiple modalities. This includes not only text, but also images, audio, and video. Qwen3-Omni is the central model that powers this functionality, combining these inputs into a single, unified system that supports more natural and flexible human-computer interaction.

Text, Image, Audio, and Video Input

Qwen3-Omni is designed to accept and interpret a wide range of inputs. It can read and generate text, describe or analyze images, interpret speech, and even process video clips. Each input type is routed through internal pathways that allow the model to understand and respond appropriately based on context. This allows for more fluid multimodal applications, such as voice-driven chat with visual feedback or video summarization with language-based outputs.

Language Coverage and Speech Capabilities

The model supports speech recognition in 19 languages and speech generation in 10, which puts it among the more broadly multilingual speech-capable systems available today. This coverage matters for real-time translation, customer service, and accessibility applications. Qwen3-Omni can convert spoken language to text, understand the intent, and respond in spoken output, all within a single interaction loop.

Unified Interface and Modality Fusion

Qwen3-Omni doesn’t treat each modality as a separate task. Instead, it fuses them into a single model interface. This allows it to interpret prompts that mix text and visual elements or combine spoken and written instructions. The fusion of inputs leads to more natural outputs and greater contextual awareness across tasks.

Comparison to Other Multimodal Models

According to Alibaba’s internal benchmarks, Qwen3-Omni performs competitively with top-tier multimodal models like GPT-4o and Gemini 1.5 Pro across captioning, speech understanding, and video comprehension tasks. Third-party evaluations are still limited, so these results should be treated as preliminary.

Real-World Potential

The multimodal design in Qwen3 opens the door to a wide range of applications. From intelligent assistants that understand both voice and visual input, to content moderation tools that review images, videos, and text in a single pipeline, Qwen3 is engineered to support the next generation of user experiences.

4. Benchmarks and Performance Claims

Alibaba has made bold claims about Qwen3’s performance across a range of domains, including coding, math reasoning, visual tasks, translation, and safety. While many of these claims are self-reported, the available data paints a picture of a model suite that is both broad and competitive. This section breaks down the performance indicators we know so far.

Math and Reasoning

Qwen3-Max-Heavy reportedly achieves perfect scores on competition-level math reasoning benchmarks; Alibaba’s announcement cites AIME 2025 and HMMT. If the result holds up under independent testing, it would place the model in the same tier as the strongest reasoning models from Western labs. The ability to handle symbolic reasoning, multi-step logic, and structured problem solving suggests a significant leap forward for Chinese-developed models.

Code Generation

Qwen3-Coder is optimized for software development and technical problem solving. It performs well on benchmarks like HumanEval and MBPP, generating correct and syntactically valid code across Python, JavaScript, and other common languages. Alibaba’s own evaluations suggest strong performance in both code synthesis and completion tasks, though broader third-party validation is still pending.
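For readers unfamiliar with how these coding benchmarks are scored, HumanEval-style results are usually reported as pass@k: the chance that at least one of k generated solutions passes the unit tests. The sketch below implements the standard unbiased estimator for a single problem. The sample counts are made up for illustration, and nothing here says which k Alibaba reports.

```python
from math import comb

def pass_at_k(n, c, k):
    """Unbiased pass@k for one problem.

    n: total samples generated, c: samples that passed the tests,
    k: number of samples the user is allowed to draw.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # every draw of k samples must include a passing one
    return 1.0 - comb(n - c, k) / comb(n, k)

def benchmark_score(results, k):
    """Average pass@k over a list of (n, c) pairs, one pair per problem."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)

# Hypothetical run: 10 samples per problem, with 3, 0, and 10 passing.
results = [(10, 3), (10, 0), (10, 10)]
score_at_1 = benchmark_score(results, k=1)
```

A single headline number hides both n and k, so two reported scores are only comparable when they use the same sampling setup.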

Vision-Language Tasks

Qwen3-VL ranks highly in open evaluations for image captioning, visual question answering (VQA), and object recognition. On benchmarks like COCO, VQAv2, and RefCOCO+, it reportedly matches or outperforms many closed models. Alibaba positions it as the strongest open-source vision-language model currently available.

Real-Time Speech and Translation

LiveTranslate-Flash has demonstrated low latency and high translation accuracy across live speech in multiple languages. In internal testing, it has outperformed fast-response models like GPT-4o-Voice and Gemini-2.5-Flash, especially in handling rapid speaker transitions and maintaining semantic fidelity across languages.

Safety and Moderation

Qwen3-Guard is Alibaba’s dedicated safety model for content moderation. It has been fine-tuned on aligned datasets to detect and block harmful, biased, or policy-violating content. Benchmarks for safety are harder to quantify, but Alibaba claims high recall and low false-positive rates across multiple domains, including text, audio, and visual content.
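The two numbers mentioned here, recall and false-positive rate, are straightforward to compute once a labeled evaluation set exists. The sketch below shows the calculation on a tiny invented sample, where 1 means harmful and 0 means benign. The labels and predictions are illustrative only and are not Qwen3-Guard results.

```python
def moderation_metrics(labels, predictions):
    """Return (recall, false_positive_rate) for binary moderation decisions."""
    tp = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 1)
    fn = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 0)
    fp = sum(1 for y, p in zip(labels, predictions) if y == 0 and p == 1)
    tn = sum(1 for y, p in zip(labels, predictions) if y == 0 and p == 0)
    recall = tp / (tp + fn) if (tp + fn) else 0.0               # harmful items caught
    false_positive_rate = fp / (fp + tn) if (fp + tn) else 0.0  # benign items blocked
    return recall, false_positive_rate

# Invented evaluation sample: 4 harmful and 4 benign items.
labels      = [1, 1, 1, 0, 0, 0, 0, 1]
predictions = [1, 1, 0, 0, 1, 0, 0, 1]
recall, false_positive_rate = moderation_metrics(labels, predictions)
```

The two metrics pull against each other: a stricter filter raises recall but blocks more benign content, which is why a claim of high recall and low false positives together is worth verifying on independent data.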

Limitations and Caveats

While these results are impressive, many of the performance claims come from Alibaba itself. Independent evaluation is limited at this stage. Key questions remain about performance consistency, edge case reliability, and robustness in real-world deployment.

What the Benchmarks Suggest

Taken together, the early numbers suggest that Qwen3 is not just large. It is performing at or near the frontier in multiple categories. If these results hold up under public scrutiny, Alibaba may have produced the first truly global challenger to the dominance of Western AI labs.

5. Qwen3 in Production: Use Cases and Ecosystem

Qwen3 is not just a research effort. Alibaba is actively integrating these models into its cloud infrastructure, developer tools, and commercial services. This section looks at how Qwen3 is being used in real-world scenarios, how developers can access it, and what the broader ecosystem looks like today.

Integration with Alibaba Cloud

Qwen3 models are already available through Alibaba Cloud’s Model Studio platform. Users can deploy models directly via API, fine-tune them using prebuilt workflows, and connect them to other Alibaba services like data storage, analytics, and real-time communication tools. This integration allows enterprises to move from experimentation to production quickly.

Developer Tools and Access

Developers can interact with Qwen3 through REST APIs, SDKs, and graphical tools. Alibaba has emphasized usability by offering preconfigured endpoints for text generation, multimodal input, code synthesis, and translation. Fine-tuning support is also available, enabling domain-specific adaptation of base models for enterprise or niche use cases.
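For a sense of what a REST call to a hosted chat model typically involves, here is a sketch that builds, but does not send, a JSON request using only the Python standard library. It assumes an OpenAI-style `/chat/completions` route and bearer-token authentication. The base URL, API key, and model identifier are placeholders, and the real endpoint paths and model names should be taken from Alibaba Cloud's documentation.

```python
import json
import urllib.request

def build_chat_request(base_url, api_key, model, user_content):
    """Build a POST request for an assumed OpenAI-style chat completions route."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": user_content}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

# Placeholder values: replace with a real endpoint, key, and model identifier.
request = build_chat_request(
    base_url="https://example.invalid/v1/",
    api_key="sk-placeholder",
    model="qwen3-placeholder",
    user_content="Summarize this support ticket in two sentences.",
)

# Sending is left commented out because the endpoint above is not real:
# with urllib.request.urlopen(request) as response:
#     reply = json.loads(response.read())
```

Keeping request construction separate from sending makes it easy to log or unit-test the payload before any traffic reaches a paid endpoint.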

Open-Source Availability

Several Qwen3 models, including Qwen3-VL and Qwen3-Coder, have been released with open weights under the Apache 2.0 license. This allows for use, modification, and redistribution in both research and commercial contexts. It also encourages community-driven improvements and third-party benchmarking.

Real-World Deployments

Alibaba is already using Qwen3 internally across multiple business units. Use cases include customer service chatbots, product recommendation engines, real-time content moderation, and intelligent voice assistants for logistics and e-commerce. These applications help test Qwen3 models under real traffic and unpredictable user behavior, providing critical feedback for further refinement.

Ecosystem Strength

What makes Qwen3 viable at scale is not just the models themselves, but the surrounding infrastructure built to support them. From training pipelines and inference engines to safety filters and performance monitoring tools, Alibaba is positioning Qwen3 as part of a complete AI development environment, not just a standalone model release.

6. Strategic Implications

Qwen3 is more than a technical release. It reflects a shift in how Chinese labs approach AI development, deployment, and global competition. Alibaba’s rapid-fire rollout of advanced, specialized models sends a message not just to developers, but to the broader AI ecosystem.

Release Strategy Compared to Western Labs

While labs like OpenAI, Anthropic, and Google tend to follow slower, tightly controlled release cycles, Alibaba has gone in the opposite direction. The Qwen3 launch dropped multiple high-performance models at once, each tailored for a different domain. This aggressive schedule is designed to build momentum fast, capture developer mindshare, and push adoption across sectors.

Specialization Over Monoliths

Rather than relying on a single flagship model, Alibaba is embracing a modular approach built on specialization. Qwen3 is designed to handle vision, language, coding, translation, and moderation through focused submodels. This improves performance and efficiency, while offering more flexibility to enterprise users.

Domestic and Global Positioning

Qwen3 positions Alibaba as a serious contender in the global AI landscape. It also strengthens China’s domestic AI ecosystem, providing competitive models that reduce reliance on Western APIs and platforms. With open-source components and cloud-ready infrastructure, Alibaba is creating tools that can scale locally and compete globally.

Innovation Through Scale and Speed

By combining trillion-parameter architecture with real-time deployment tools, Alibaba is testing whether fast iteration can outperform slow perfection. The Qwen3 rollout strategy reflects a bet on speed, volume, and ecosystem strength as key drivers of AI innovation in the next phase of the race.

7. Conclusion: Is Qwen3 a Frontier Contender?

Qwen3 is not just a response to the AI leaders in the West. It is Alibaba’s bid to define what comes next. With a trillion-parameter model, strong multimodal capabilities, open-source components, and production-ready deployment, Qwen3 is a serious entry into the global AI landscape.

Strengths

Qwen3 stands out for its breadth, scale, and specialization. Its modular approach allows different models to excel in focused domains like coding, math reasoning, and real-time speech. The support for long-context reasoning, multilingual speech, and integrated safety systems shows a maturity that goes beyond experimentation. Combined with cloud deployment and open access for many variants, Qwen3 is well-positioned for real-world use.

Unknowns

Independent benchmarks and stress testing are still limited. Most performance data has come from Alibaba itself. Questions remain about the cost of running these models at scale, how safety systems behave in unpredictable scenarios, and whether the trillion-parameter Qwen3-Max will be broadly accessible to the public or remain limited to enterprise partnerships.

The Competitive Landscape

Qwen3 enters the market at a time when competition is intensifying. OpenAI, Google, and Anthropic are refining fewer, more generalized models. Alibaba is taking the opposite route, offering a full stack of domain-specific tools. This gives users more control and more options, but also increases the complexity of integration and maintenance.

Final Take

Qwen3 is arguably the most complete and capable AI release to come out of China to date. It reflects a shift in strategy, from catching up to actively shaping the direction of the AI ecosystem. Whether or not it outperforms GPT-4 or Gemini on every metric, it is already pushing the boundary of what a global AI competitor looks like.