AI Moves Into the Workflow: Key AI Developments in Late April 2026

A practical look at frontier AI’s shift toward agent orchestration, multimodal perception, full-stack expansion, and secure infrastructure

What happens when AI companies stop competing only on model intelligence and start competing to control where work gets done?

The key AI developments in late April 2026 point to a new stage in the AI race. The focus is moving from isolated model performance to the systems that connect AI with real work.

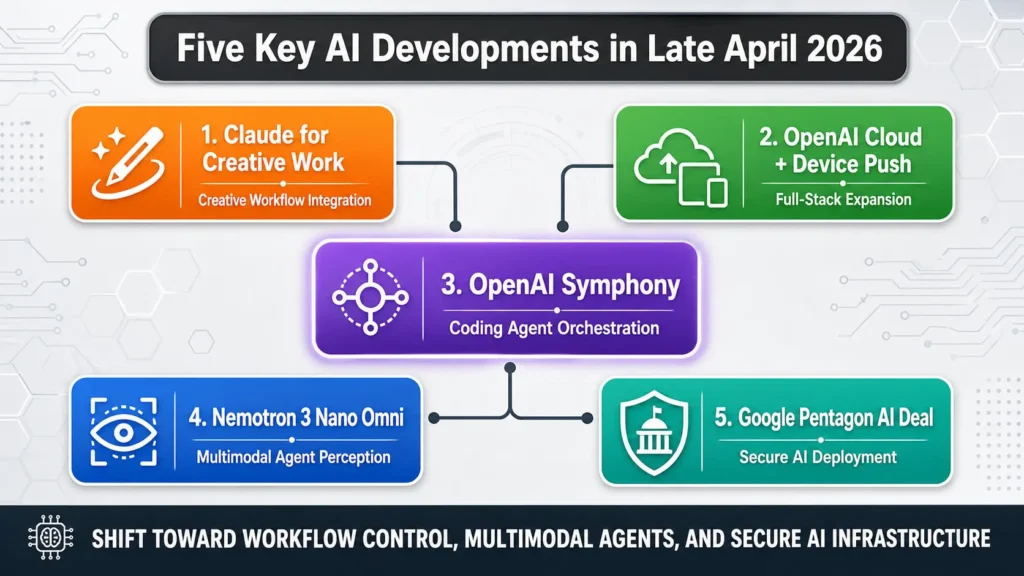

In late April, this shift became visible across several fronts. Anthropic moved Claude into creative workflows. OpenAI expanded across cloud access, coding-agent orchestration, and possible device-level interfaces. Nvidia introduced a multimodal model for agent perception. Google moved deeper into classified government AI deployment.

Together, these developments show that frontier AI companies are becoming platform operators, not just model providers. The future of AI will be shaped by the systems that connect intelligence to execution. This late-April brief continues the earlier discussion on key AI developments in early April 2026, where AI was already moving closer to real operational systems.

1. Five Key AI Developments in Late April 2026

Late April 2026 was not defined by one breakthrough model. It was defined by a broader move toward AI operating environments.

The major developments showed frontier AI moving into five important layers:

- Creative workflow integration

- Cloud and device-level expansion

- Coding-agent orchestration

- Multimodal agent perception

- Secure government and defense deployment

This matters because AI value is increasingly created where models meet real work. A powerful model alone is not enough. The surrounding workflow, tools, infrastructure, governance, and human review process determine whether AI becomes useful in practice.

In early April, the major theme was the rapid advance of AI capability. By late April, the focus had moved closer to execution. Frontier AI companies were embedding models into creative software, enterprise coding systems, multimodal perception pipelines, cloud platforms, and classified government infrastructure.

This suggests that the next stage of AI competition will be less about isolated model performance and more about operational control.

1️⃣ Anthropic expands Claude into creative workflows

On April 28, 2026, Anthropic launched Claude for Creative Work, adding new connectors designed for creative professionals. These connectors allow Claude to work more directly with tools such as Adobe Creative Cloud, Blender, Ableton, Affinity, Autodesk Fusion, SketchUp, Splice, Resolume Arena, and Wire. Anthropic described the move as a way to connect Claude to the platforms where creative work already happens.

The strategic meaning is important. Anthropic is not simply improving Claude as a chatbot. It is moving Claude deeper into professional workflows. This reflects a broader shift from “AI that answers questions” to “AI that participates in the work process.”

Reports around the same period also suggested that Anthropic’s private-market valuation had approached $1 trillion. This is best read as a reported private-market or secondary-market valuation signal, not as an officially completed $1 trillion funding round.

2️⃣ OpenAI releases Symphony for Codex orchestration

On April 28, 2026, OpenAI released Symphony, an open-source specification for Codex orchestration. Symphony turns a project-management board such as Linear into a control plane for coding agents. Each open task can be assigned to an agent, agents run continuously, and humans review the results.

This is a meaningful shift in software development. Instead of using AI only as a coding assistant inside a single chat window, Symphony points toward a system where AI agents are managed through existing enterprise workflow tools. In this model, the issue tracker becomes the operating layer, the coding agent becomes the worker, and human review becomes the governance checkpoint.

The broader implication is that AI software engineering is moving from “prompting” to orchestration. This is directly connected to the future of autonomous work, where the key problem is not just whether an agent can write code, but whether it can be assigned, monitored, restarted, reviewed, and integrated into a real delivery pipeline.
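To make the "assigned, monitored, restarted, reviewed" idea concrete, here is a minimal sketch of a board acting as a control plane. It is not Symphony's specification or API. The `Issue` class, the status names, the `dispatch` function, and `demo_agent` are all hypothetical, and the board is just an in-memory list.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Issue:
    """One card on a hypothetical project board (not a real tracker schema)."""
    key: str
    title: str
    status: str = "open"          # open -> in_review, or open -> blocked
    attempts: int = 0
    result: Optional[str] = None


def dispatch(board: list[Issue], agent: Callable[[Issue], str],
             max_attempts: int = 2) -> None:
    """Hand every open issue to the agent and restart failed runs.

    Successful work is parked in "in_review" for a human. The agent
    never marks its own work as done.
    """
    for issue in board:
        if issue.status != "open":
            continue
        while issue.attempts < max_attempts:
            issue.attempts += 1
            try:
                issue.result = agent(issue)
                issue.status = "in_review"
                break
            except RuntimeError:
                continue              # restart the agent on this issue
        else:
            issue.status = "blocked"  # out of attempts: escalate to a person


def demo_agent(issue: Issue) -> str:
    """Stand-in for a coding agent, with scripted failures for the demo."""
    if "flaky" in issue.title and issue.attempts == 1:
        raise RuntimeError("agent run crashed")
    if "impossible" in issue.title:
        raise RuntimeError("agent cannot complete task")
    return f"patch for {issue.key}"


board = [
    Issue("ENG-1", "fix typo"),
    Issue("ENG-2", "flaky test repair"),
    Issue("ENG-3", "impossible migration"),
    Issue("ENG-4", "already shipped", status="done"),
]
dispatch(board, demo_agent)
for issue in board:
    print(issue.key, issue.status, issue.attempts)
```

The design choice worth noticing is that the board status, not the agent, is the source of truth: a human reviewer only has to look at the "in_review" and "blocked" columns.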

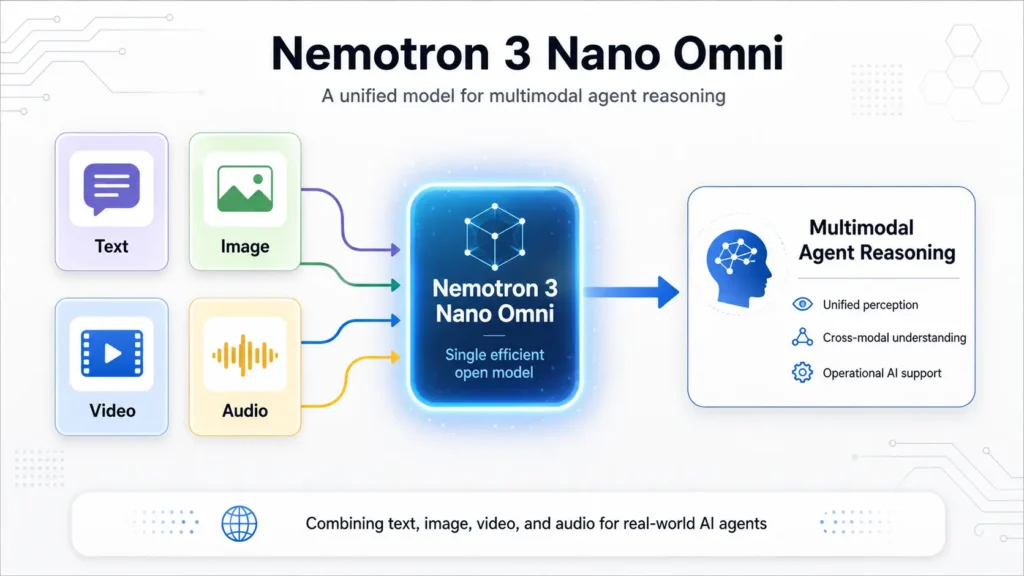

3️⃣ Nvidia introduces Nemotron 3 Nano Omni for multimodal agent perception

In late April 2026, Nvidia introduced Nemotron 3 Nano Omni, an open multimodal model designed to process images, video, speech, and text within a single architecture. Nvidia describes the model as a 30B-A3B hybrid mixture-of-experts model, meaning it has 30 billion total parameters but activates only a fraction of them for each token during inference. The “A3B” suffix conventionally denotes about 3 billion active parameters.
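A rough back-of-the-envelope calculation shows why the total-versus-active distinction matters. The sketch below assumes that "A3B" means about 3 billion active parameters per token, and it uses the common rule of thumb of roughly 2 FLOPs per active parameter per token for a forward pass. Both are assumptions, and the estimate ignores attention cost and any modality encoders.

```python
TOTAL_PARAMS = 30e9    # "30B" in the model name, as reported in the article
ACTIVE_PARAMS = 3e9    # ASSUMPTION: "A3B" read as ~3B active parameters per token

# Share of the model's weights that participate in each token.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

# Rule of thumb (an approximation): ~2 FLOPs per active parameter per token.
flops_per_token_moe = 2 * ACTIVE_PARAMS
flops_per_token_dense = 2 * TOTAL_PARAMS
compute_ratio = flops_per_token_moe / flops_per_token_dense

print(f"active fraction: {active_fraction:.0%}")
print(f"per-token compute vs. a dense 30B model: {compute_ratio:.0%}")
```

Under these assumptions, each token touches about a tenth of the weights. All 30 billion parameters still have to be stored in memory, so the saving is in compute per token, not in model size.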

This matters because real-world AI agents need more than text reasoning. They need to see, listen, interpret context, and respond with low latency. Nvidia’s technical report says Nemotron 3 Nano Omni can deliver up to 9x higher output throughput than Qwen3-Omni on long-video workloads under the stated test conditions.

The strategic meaning is that Nvidia is expanding beyond chips and infrastructure. It is also shaping the model layer that runs on top of its hardware. For agentic AI, unified multimodal perception may become a key requirement for enterprise, robotics, edge AI, and real-time operational systems.

4️⃣ OpenAI moves further toward cloud and device-level integration

OpenAI’s late-April story was not a single product launch. It was a broader signal that the company is moving toward a more vertically integrated AI platform.

On April 28, 2026, Reuters reported that OpenAI’s latest models and Codex coding agent became available through Amazon Bedrock, following changes to OpenAI’s earlier Microsoft cloud exclusivity arrangement. This made OpenAI models more accessible inside AWS enterprise environments and strengthened OpenAI’s position in cloud-based AI deployment.

At the same time, reports suggested that OpenAI was exploring AI-native hardware, including a possible smartphone effort involving Qualcomm, MediaTek, and other supply-chain partners, with mass production possibly targeted for 2028. This should be treated as a reported hardware direction, not as a confirmed product launch.

The larger pattern is clear. OpenAI is not positioning itself only as a model provider. It is extending across cloud distribution, coding agents, and potentially device-level AI interfaces. This supports the view that frontier AI companies are trying to control more of the full stack where AI value is delivered.

5️⃣ Google signs a classified AI agreement with the Pentagon

On April 28, 2026, reports said Google had signed a classified agreement with the U.S. Department of Defense that would allow its AI models to be used for “any lawful government purpose.” The agreement placed Google more directly inside the emerging market for secure government and defense AI infrastructure.

This development is part of a larger sovereign AI trend. By May 1, 2026, the Pentagon had also announced agreements with several major technology companies, including Google, OpenAI, Microsoft, Amazon Web Services, Nvidia, Reflection, and SpaceX, to support classified military AI systems.

The technical and strategic issue is not only model performance. It is governance, lawful use, human oversight, security, and institutional trust. As frontier models move into classified systems, the question becomes: who controls deployment boundaries, auditability, and accountability?

Key Insight from Five Developments

Taken together, the key AI developments in late April 2026 show that frontier AI competition is moving from model capability to environment ownership.

Anthropic is embedding Claude into creative workflows. OpenAI is expanding across cloud access, coding agents, and possible device interfaces. Nvidia is building multimodal perception models for agentic systems. Google is entering deeper classified government deployment. Symphony shows how AI agents may be governed through enterprise work-management tools.

The common pattern is that AI companies are no longer satisfied with being API providers. They are trying to own or influence the environments where AI work actually happens: the software stack, the cloud layer, the device interface, the agent-control system, and the secure institutional deployment channel.

For business leaders, this is the practical lesson: the next AI advantage will not come only from choosing the best model. It will come from designing the best AI operating environment, where models, tools, data, workflows, governance, and human review work together as one system.

2. The System Shift: Three Technical Characteristics Behind These Developments

Behind the key AI developments in late April 2026, one pattern is clear: AI competition is moving from model performance to system design. The harder question is no longer whether an AI model can generate impressive output. It is whether AI can be connected to real workflows, governed through reliable control layers, and deployed safely in high-stakes environments.

Three technical characteristics define this late-April shift.

2.1 Workflow Integration Becomes the New AI Interface

The first shift is from chatbot interaction to workflow integration.

Claude for Creative Work and OpenAI Symphony both show that AI is moving closer to the tools where work already happens. Instead of asking users to leave their workflow and interact with a separate chatbot, AI is being embedded into creative software, coding systems, project boards, and enterprise work environments.

This matters because AI value depends on placement. A strong model can still produce weak business results if it sits outside the real workflow.

Key implications:

- AI becomes part of the work environment, not a separate destination.

- Professional tools become AI interfaces, including design software, coding platforms, and project-management systems.

- Workflow context becomes a competitive advantage, because AI can understand tasks better when it is connected to the tools, files, and processes surrounding the work.

- Enterprise value comes from reducing handoffs, delays, and repeated manual coordination.

For enterprises, the lesson is practical: AI should be placed where work is actually delayed, repeated, reviewed, or handed off.

2.2 Agent Orchestration Replaces One-Time Prompting

The second shift is from one-time prompting to agent orchestration.

In a simple AI workflow, a user gives a prompt and receives an answer. In an agentic workflow, the system assigns work, monitors progress, coordinates tools, routes exceptions, and waits for human review when needed. This requires more than a smarter model. It requires an operating structure around the agent.

OpenAI Symphony is a good example. It turns issue trackers such as Linear into control planes for coding agents. The issue tracker is no longer just a task list. It becomes the system that assigns, organizes, and governs agent work.

Key implications:

- AI agents need task assignment, not just prompts.

- Human review becomes a governance checkpoint, not an afterthought.

- Project-management tools become control systems for autonomous work.

- Agent performance must be monitored across the full task lifecycle, from assignment to completion to review.

- The enterprise challenge shifts from using AI to managing AI work.

This is an important change. The question is no longer only, “Can the model solve this task?” The better question is: Can the system manage the agent safely and reliably from start to finish?
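One way to picture "managing the agent from start to finish" is as a small state machine with an explicit transition table. The sketch below is illustrative only. The stage names and the `AgentTask` class are hypothetical and do not come from any vendor's product.

```python
from enum import Enum


class Stage(Enum):
    ASSIGNED = "assigned"
    RUNNING = "running"
    FAILED = "failed"
    IN_REVIEW = "in_review"
    DONE = "done"


# Allowed moves. There is deliberately no path to DONE that skips IN_REVIEW.
ALLOWED = {
    Stage.ASSIGNED: {Stage.RUNNING},
    Stage.RUNNING: {Stage.IN_REVIEW, Stage.FAILED},
    Stage.FAILED: {Stage.RUNNING},                    # restart the agent
    Stage.IN_REVIEW: {Stage.DONE, Stage.ASSIGNED},    # approve, or send back
    Stage.DONE: set(),
}


class AgentTask:
    """Tracks one unit of agent work and refuses illegal stage changes."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.stage = Stage.ASSIGNED
        self.history = [Stage.ASSIGNED]   # full lifecycle, kept for monitoring

    def move(self, target: Stage) -> None:
        if target not in ALLOWED[self.stage]:
            raise ValueError(f"{self.stage.value} -> {target.value} is not allowed")
        self.stage = target
        self.history.append(target)


# A run that fails once, is restarted, and then passes human review.
task = AgentTask("update docs")
for step in (Stage.RUNNING, Stage.FAILED, Stage.RUNNING, Stage.IN_REVIEW, Stage.DONE):
    task.move(step)

# An attempt to finish without review is rejected.
shortcut = AgentTask("hotfix")
shortcut.move(Stage.RUNNING)
try:
    shortcut.move(Stage.DONE)
    skipped_review = True
except ValueError:
    skipped_review = False
```

Because the review step is encoded in the transition table, human review is enforced by the system rather than left to convention.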

2.3 Multimodal and Secure Infrastructure Become Core Requirements

The third shift is from isolated AI tools to multimodal and secure infrastructure.

Nvidia’s Nemotron 3 Nano Omni points to the multimodal side of this shift. Real-world agents need to process more than text. They may need to interpret images, video, speech, documents, and real-time signals. For robotics, enterprise monitoring, manufacturing, security, and field operations, multimodal perception becomes a foundation for action.

Google’s Pentagon AI agreement points to the secure-infrastructure side. When AI moves into government, defense, and classified environments, model quality alone is not enough. The system must support lawful use, access control, auditability, human oversight, and institutional trust.

Key implications:

- Agents need multimodal perception to understand real environments.

- Text-only intelligence is not enough for many operational AI systems.

- Secure deployment becomes a core requirement in government, defense, finance, healthcare, and enterprise infrastructure.

- Governance must be designed into the system, not added after deployment.

- Trust depends on the full infrastructure, including access control, monitoring, audit logs, and human escalation paths.
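The idea that governance is designed into the system can be sketched as a gate that every agent action must pass through. This is a simplified illustration, not a description of any real government or vendor system. The `ActionGate` class, the agent name, and the action names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ActionGate:
    """Checks access, escalates sensitive actions, and logs every decision."""
    permissions: dict[str, set[str]]      # agent name -> actions it may request
    needs_human: set[str]                 # actions that always go to a person
    audit_log: list[dict] = field(default_factory=list)

    def request(self, agent: str, action: str) -> str:
        if action not in self.permissions.get(agent, set()):
            decision = "denied"           # access control
        elif action in self.needs_human:
            decision = "escalated"        # human escalation path
        else:
            decision = "allowed"
        # Denials are logged too, so the audit trail is complete.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "decision": decision,
        })
        return decision


gate = ActionGate(
    permissions={"report-bot": {"read_logs", "send_report"}},
    needs_human={"send_report"},
)
decisions = [
    gate.request("report-bot", "read_logs"),
    gate.request("report-bot", "send_report"),
    gate.request("report-bot", "delete_records"),
    gate.request("unknown-bot", "read_logs"),
]
print(decisions)
```

The gate is checked before the action runs, and the log records refusals as well as approvals, which is what makes later auditing possible.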

This is why late April’s developments matter. They show that frontier AI is becoming embedded in the environments where work, security, and institutional decisions happen.

Section 2 Key Insight

The system shift behind late April’s AI developments can be summarized in three moves:

- AI is moving into workflows. Models are being embedded inside the tools and platforms where people already work.

- Agents are being orchestrated through control systems. Issue trackers, project boards, and enterprise platforms are becoming management layers for autonomous AI work.

- Multimodal and secure infrastructure are becoming essential. Real deployment requires perception, governance, auditability, and trusted execution environments.

The deeper meaning is clear: the industry is moving beyond smarter models toward AI systems that can operate inside real work environments with structure, context, and control.

3. From Technology to Application: Where These Trends Will Be Used

The key AI developments in late April 2026 are not only technical announcements. They also point to where AI will create practical value next.

The common direction is clear: AI is moving closer to the environments where people create, code, monitor, decide, and execute.

3.1 Professional Workflow Automation

The first application area is professional workflow automation.

Claude for Creative Work and OpenAI Symphony both show that AI is moving into the tools where professionals already work. This includes creative software, coding systems, project boards, and enterprise work platforms.

In creative work, AI can support design, editing, music production, 3D modeling, and content development. The value is not only faster output. It is smoother movement from idea to draft, revision, and final production.

In software engineering, AI agents can be assigned to issues, generate code, prepare pull requests, update documentation, and support code review. Symphony points to a future where issue trackers become control systems for coding agents.

Likely applications include:

- Creative production workflows for design, media, audio, and 3D content

- Software development workflows for issue-to-code automation and bug fixing

- Project coordination through task boards, review checkpoints, and workflow status updates

- Documentation and knowledge work across files, tools, and team handoffs

The practical lesson is simple: AI creates more value when it is embedded into the workflow, not placed outside it as a separate chatbot.

3.2 Multimodal Operations and Real-World Agents

The second application area is multimodal operations.

Nvidia’s Nemotron 3 Nano Omni points to the growing need for AI agents that can process more than text. Real work often includes images, video, speech, documents, sensor data, and physical context.

This matters because many operational tasks cannot be solved with text reasoning alone. A field technician may need AI to interpret a photo, a manual, a spoken explanation, and a maintenance record at the same time.

A manufacturing system may need AI to combine machine signals, visual inspection data, operator notes, and historical repair records. A security system may need to interpret video, audio, alerts, and written reports together.

Likely applications include:

- Manufacturing monitoring through visual inspection, machine data, and maintenance records

- Robotics and edge AI where agents need real-time perception

- Field service support using photos, manuals, diagrams, and voice input

- Healthcare and clinical support where images, notes, and records must be interpreted together

- Security and monitoring systems that combine video, audio, alerts, and operational logs

The key point is that real-world agents need perception. They must understand the environment before they can act reliably.

3.3 Secure Infrastructure and Full-Stack AI Deployment

The third application area is secure infrastructure and full-stack AI deployment.

OpenAI’s cloud expansion and reported device-level ambitions show that AI companies want to reach users through more layers of the technology stack. Google’s Pentagon AI agreement shows a parallel move into secure government and defense environments.

For enterprises, this means AI deployment will increasingly depend on trusted infrastructure. Models must connect to cloud platforms, enterprise data, identity systems, access controls, and audit logs.

For government and defense, the requirements are even stricter. AI systems must support lawful use, human oversight, secure access, traceability, and institutional accountability.

Likely applications include:

- Enterprise AI deployment through secure cloud platforms

- AI-native devices that make AI interaction more continuous and context-aware

- Government document analysis for search, summarization, and classification

- Defense and administrative workflows involving planning, logistics, and reporting

- Sovereign AI infrastructure where security, governance, and national requirements matter

The practical issue is trust. In high-stakes environments, the question is not only whether AI can produce useful output. The question is whether the whole system can be controlled, monitored, audited, and governed.

Section 3 Key Insight

The practical applications of late April’s AI developments can be grouped into three areas:

- Professional workflow automation. AI moves into creative tools, coding systems, and project workflows.

- Multimodal operations and real-world agents. AI agents begin to understand text, image, video, speech, and operational context together.

- Secure infrastructure and full-stack deployment. AI becomes embedded in cloud platforms, devices, enterprise systems, and government infrastructure.

The deeper lesson is that AI adoption is moving from tool usage to system embedding.

The organizations that benefit most will not simply choose the strongest model. They will design the best environment for AI to work safely, reliably, and productively.

4. Conclusion: Late April Shows AI Moving Into the Workflow Layer

For business leaders, the late-April signal is practical: AI strategy must start with where work actually breaks down.

A company may have access to a strong model, but value is lost if tasks still move through slow handoffs, disconnected tools, unclear approval steps, weak data quality, or manual rework.

This is where the AI Value Gap becomes visible.

Many organizations adopt advanced AI tools but leave the surrounding workflow unchanged. The result is familiar: impressive demonstrations, limited operational impact, and growing difficulty proving ROI.

The better approach is to design AI around the work system.

That means asking:

- Which task should AI support or execute?

- Which data source should it trust?

- Which tool should it use?

- Who approves the output?

- How are errors, exceptions, and risks handled?

- How is performance measured after deployment?

These questions move AI adoption from experimentation to operational design.
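One lightweight way to put the six questions into practice is to treat them as required fields in a deployment record and refuse to proceed while any is blank. The sketch below is only an illustration. The `AgentDeploymentSpec` class, its field names, and the sample values are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class AgentDeploymentSpec:
    """One field per design question; an empty string means 'not yet answered'."""
    task: str                   # Which task should AI support or execute?
    trusted_data_source: str    # Which data source should it trust?
    tool: str                   # Which tool should it use?
    approver: str               # Who approves the output?
    exception_handling: str     # How are errors, exceptions, and risks handled?
    success_metric: str         # How is performance measured after deployment?

    def unanswered(self) -> list[str]:
        """Return the names of questions that still have no answer."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Hypothetical draft with two questions still open.
spec = AgentDeploymentSpec(
    task="Draft replies to supplier invoice queries",
    trusted_data_source="ERP invoice ledger",
    tool="email drafting connector",
    approver="",
    exception_handling="route to the accounts-payable queue",
    success_metric="",
)
open_questions = spec.unanswered()
print("Ready to deploy" if not open_questions else f"Still open: {open_questions}")
```

In this draft the approver and the success metric are missing, which are the two gaps that most often make ROI hard to prove later.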

They also explain why late April matters. The leading AI companies are not only releasing better models. They are building the layers that make AI usable inside real work: workflow interfaces, agent orchestration, multimodal perception, cloud access, device channels, and secure deployment environments.

The final takeaway is clear.

The next AI advantage will belong to organizations that connect intelligence to execution with structure, governance, and measurable outcomes.

This pattern also reinforces the need for AI agent reliability and a trusted ERP-Agent Bridge once AI begins to act inside enterprise systems.