Scaling Agentic AI Systems: Governance, Reliability, and Operating Discipline Beyond the Pilot Stage

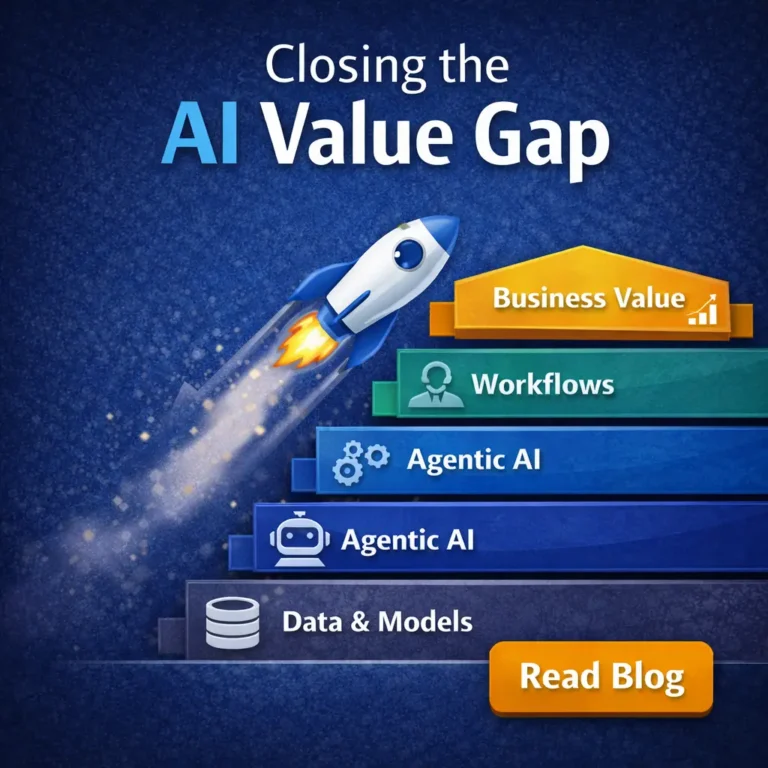

This blog is part 4 of 4 in the series “The AI Value Gap: Scaling Transformation and Agentic Innovation.”

Engineering the Bridge Between LLM Autonomy and ERP/CRM Systems of Record.

How do you move Agentic AI systems beyond “pilot purgatory” and into a state where they can safely modify live business state without constant human “babysitting”?

The transition from experimental prototypes to production-grade Agentic AI systems represents a fundamental shift in enterprise architecture, moving from static “Systems of Record” toward autonomous “Systems of Action”. In this new paradigm, software is no longer merely a tool for human input but an intelligent ecosystem capable of reasoning, planning, and executing multi-step workflows across the enterprise backbone. For engineers and architects, the challenge lies in bridging the gap between probabilistic Large Language Model (LLM) outputs and the deterministic requirements of ERP, CRM, and workflow infrastructures.

Engineering these systems for the live environment requires a lean agentic layer that interfaces directly with the data layer of core systems, governed by rigorous oversight and human-in-the-loop controls. By shifting the focus from simple prompt-following to a robust architectural “bridge,” organizations can resolve the “maintenance trap,” where agents require more human hours to fix than they save, and achieve true operational autonomy. This blog explores the high-level engineering discipline required to move Agentic AI systems beyond “pilot purgatory” and into a state of governed, reliable execution at scale.

1. Architectural Failure Modes: Why Agentic AI Systems Break in Production

The transition from a controlled sandbox to the live enterprise environment often exposes a harsh reality: many agentic AI projects struggle to reach durable production value. Gartner has predicted that over 40% of agentic AI projects will be canceled by the end of 2027 because of escalating costs, unclear business value, or inadequate risk controls. For engineers, scaling Agentic AI systems requires moving beyond simple prompt-following and addressing the following critical failure modes:

- The Maintenance Trap and Logic Drift: Many systems work well in demos but “crash and burn” when handling actual business processes because they rely on static instructions. In production, business rules, SOPs, exceptions, and approval policies change over time; without controlled updating, versioning, evaluation, and governance, agents become difficult to maintain, requiring more human hours to “reprogram” than they save.

- Context Decay in Long-Running Workflows: A major concern for enterprises is whether AI agents remain stable, transparent, and trustworthy over time. High-level engineering must solve for “Context-Continuity,” the ability to maintain complex business logic and state over multi-day, multi-step processes without triggering a system-wide collapse or losing the objective mid-stream.

- The “Ugly Stepchild” Integration Gap: Many developers treat ERP applications as unwieldy legacy technology rather than core enablers. This leads to disconnected systems where an agent might process a CRM lead in seconds, but the organization still waits hours for a human to manually key that data into the ERP, effectively neutralizing productivity gains.

- Fragility of Visual-Based Automation: While breakthroughs like “Desktop Intelligence” allow agents to interact with legacy software by “seeing” the screen, this approach is inherently fragile. A simple UI update in the underlying ERP or CRM software can confuse the agent, making API-based “clean core” integrations far more reliable for background automation.

- The Coordination Theater of Siloed Agents: Failure often occurs when agents lack a modular, intelligent mesh that allows them to synchronize state across disparate platforms. Without a secure, governed bridge, agents remain “expensive advisors,” capable of analysis but fundamentally incapable of executing the meaningful system updates required in a production environment.

To solve these issues, architects are pivoting toward a hybrid, neuro-symbolic approach in which agents follow defined business rules (the symbolic part) while keeping the flexibility to navigate edge cases through LLM reasoning (the neural part).
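
As a rough illustration of that hybrid split, the sketch below checks explicit rules first and consults a model only when no rule applies. The `Invoice` type, the thresholds, and `fake_llm` (a stand-in for a real model call) are all hypothetical, chosen only to make the example runnable.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Invoice:
    vendor_id: str
    amount: float
    has_purchase_order: bool


ALLOWED_DECISIONS = {"approve", "reject", "escalate_to_human"}


def rule_based_decision(invoice: Invoice) -> Optional[str]:
    """Symbolic layer: return a decision when a rule clearly applies, else None.

    The thresholds are illustrative assumptions, not real policy.
    """
    if invoice.amount <= 0:
        return "reject"
    if invoice.has_purchase_order and invoice.amount <= 10_000:
        return "approve"
    if invoice.amount > 100_000:
        return "escalate_to_human"
    return None  # no rule matched: treat as an edge case


def decide(invoice: Invoice, llm_fallback: Callable[[Invoice], str]) -> str:
    """Apply rules first; consult the model only for edge cases."""
    decision = rule_based_decision(invoice)
    if decision is not None:
        return decision
    proposal = llm_fallback(invoice)
    # Free-form model output is never trusted directly: anything outside
    # the allowed decision set is routed to a human.
    return proposal if proposal in ALLOWED_DECISIONS else "escalate_to_human"


def fake_llm(invoice: Invoice) -> str:
    """Hypothetical stand-in for a model call."""
    return "approve" if invoice.amount < 20_000 else "needs more info"
```

The design choice worth noting is that the model's answer is constrained to a closed set of decisions, so an unexpected reply degrades to human review rather than to an unplanned action.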

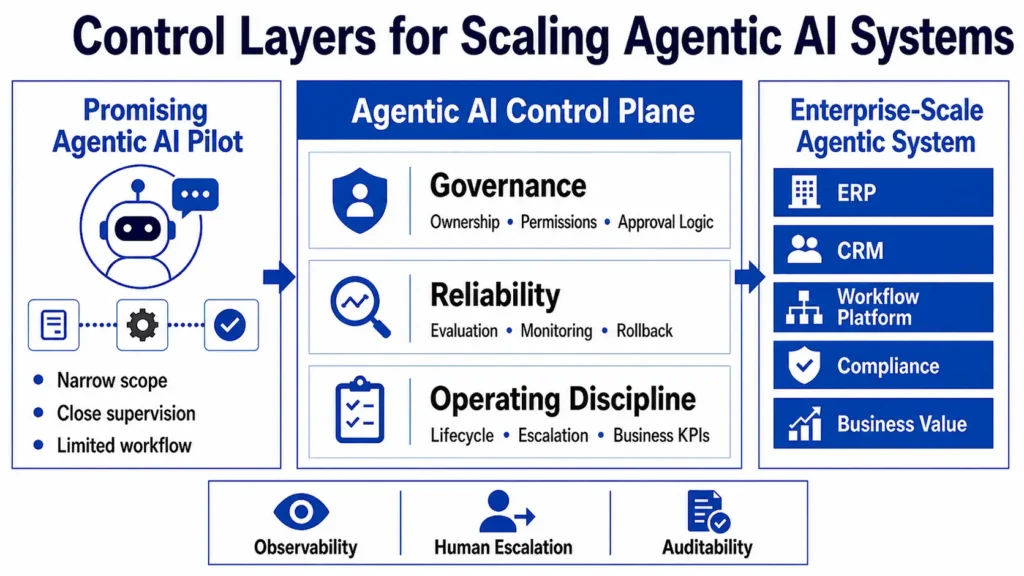

2. Governance as a Technical Control: Securing Agentic AI Systems

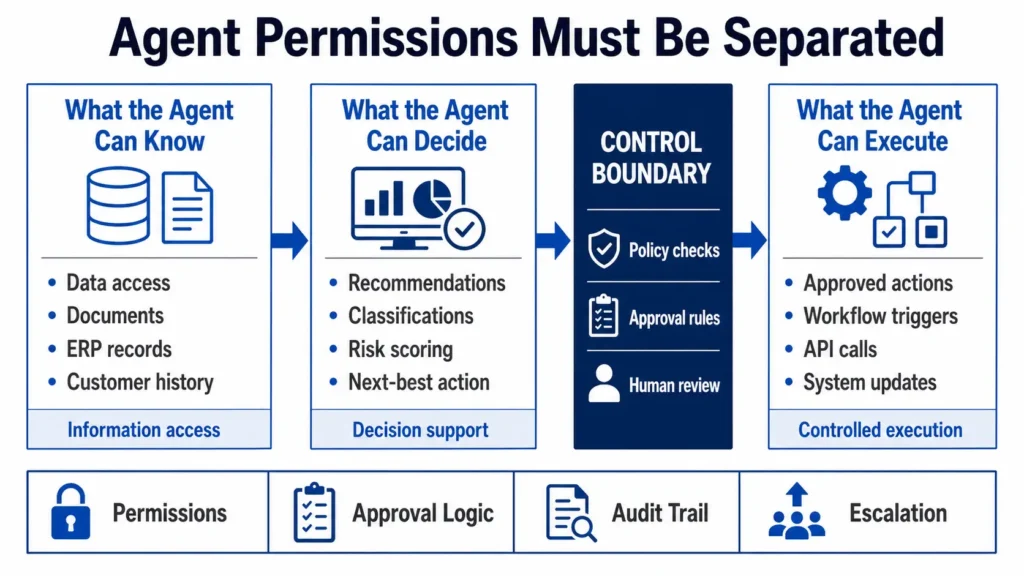

For the systems architect, governance is not a bureaucratic hurdle but a foundational technical constraint that dictates the system’s “blast radius.” When Agentic AI systems move from providing answers to executing transactions, the security model must shift from protecting data at rest to securing autonomous identities in motion.

- Non-Human Identity (NHI) Scoping: Every agent must be treated as a first-class non-human identity with its own verifiable credentials. By assigning unique service accounts or IAM roles rather than allowing agents to inherit broad user permissions, engineers can track, rotate, and revoke access without disrupting the broader ecosystem.

- The Least-Privilege Execution Model: Security scales when it follows cloud-native principles. Permissions should be attached only to the specific tools an agent needs, such as “write access to invoice table,” ensuring that even if the agent’s reasoning is influenced via prompt injection, it physically cannot access sensitive datasets or powerful administrative APIs.

- Tool Abstraction and Schema Enforcement: For production write actions, agents should not access databases directly. Instead, they should operate through a tool abstraction layer (governed APIs, validated tools, or workflow services) that exposes discrete system actions via strict JSON schemas. This layer acts as a validation gate, checking that agent outputs are well-formed and type-safe before they are committed to the system of record.

- Auditability and Provenance: In high-stakes environments, every autonomous decision must be logged for compliance. Provenance and audit trails help support accountability, regulatory review, and user rights related to automated decision-making, especially under GDPR-style requirements and financial-control regimes such as SOX.

- Hardwired Explainability: Governance teams must deploy multidisciplinary checks to ensure agent outputs align with organizational values. This involves building automated “guardrail” models that evaluate agent plans against ethical benchmarks and business-specific constraints before execution.
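
The identity-scoping, least-privilege, and schema-enforcement controls above can be combined in a single gateway that every tool call passes through. The following is a minimal sketch using only the standard library; `AgentIdentity`, `call_tool`, the `update_invoice` tool, and its schema are hypothetical names for illustration, and a real deployment would delegate identity to an IAM system and the write to a governed API.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, FrozenSet, Tuple


class ToolCallRejected(Exception):
    """Raised when a tool call fails a permission or schema check."""


@dataclass(frozen=True)
class AgentIdentity:
    """A non-human identity with an explicit, minimal set of tools."""
    agent_id: str
    allowed_tools: FrozenSet[str]


# Hypothetical schema: argument name -> exact Python type required.
UPDATE_INVOICE_SCHEMA: Dict[str, type] = {
    "invoice_id": str,
    "amount": float,
    "currency": str,
}


def validate_args(schema: Dict[str, type], args: Dict[str, Any]) -> None:
    """Reject missing, unknown, or wrongly typed arguments."""
    unknown = sorted(set(args) - set(schema))
    missing = sorted(set(schema) - set(args))
    if unknown or missing:
        raise ToolCallRejected(f"unknown={unknown} missing={missing}")
    for name, expected in schema.items():
        # Exact type match: an int or a numeric string is not a float here.
        if type(args[name]) is not expected:
            raise ToolCallRejected(f"{name} must be {expected.__name__}")


def update_invoice(invoice_id: str, amount: float, currency: str) -> str:
    """Stand-in for a governed ERP API call."""
    return f"updated {invoice_id} to {amount:.2f} {currency}"


TOOLS: Dict[str, Tuple[Dict[str, type], Callable[..., str]]] = {
    "update_invoice": (UPDATE_INVOICE_SCHEMA, update_invoice),
}


def call_tool(identity: AgentIdentity, tool_name: str, args: Dict[str, Any]) -> str:
    """Single entry point: check the registry, permissions, then the schema."""
    if tool_name not in TOOLS:
        raise ToolCallRejected(f"unknown tool {tool_name}")
    if tool_name not in identity.allowed_tools:
        raise ToolCallRejected(f"{identity.agent_id} may not call {tool_name}")
    schema, handler = TOOLS[tool_name]
    validate_args(schema, args)
    return handler(**args)


ap_agent = AgentIdentity("ap-agent", frozenset({"update_invoice"}))
crm_agent = AgentIdentity("crm-agent", frozenset())
```

Because the permission check sits outside the model, a prompt-injected `crm_agent` that asks for `update_invoice` is refused regardless of how its reasoning was manipulated.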

By treating governance as an integrated layer of the architecture, rather than a retrofit, developers ensure that Agentic AI systems are not only capable but also trustable and auditable in a production setting.

3. Reliability Engineering: Chaos Testing and Self-Healing in Agentic AI Systems

Reliability in the era of Agentic AI systems is as much about quality and accuracy as it is about traditional availability. When inference sits on the “hot path” where every millisecond affects business trust, engineers must evolve the standard Site Reliability Engineering (SRE) toolbox to account for non-deterministic failure modes.

- Chaos Engineering for Multi-Agent Systems (LLM-MAS): Traditional testing is often insufficient for detecting emergent behaviors or cascading faults in complex agent interactions. By proactively injecting controlled disruptions, such as agent communication failures, network latency, or resource exhaustion, engineers can identify vulnerabilities in production-like environments before they lead to system-wide collapses.

- Self-Healing and Autonomous Recovery: Production-grade agents must move beyond simple error logging to “Self-Healing” mechanisms. This involves engineering agents that can autonomously identify, diagnose, and recover from broken CRM triggers, API timeouts, or data entry anomalies without triggering a manual intervention.

- AI-Specific Observability and Drift Monitoring: Standard uptime metrics are no longer enough; a system may appear “healthy” while its predictions degrade silently. Reliability engineering for Agentic AI systems requires monitoring for “silent model degradation,” tracking accuracy, bias, and hallucination rates as core operational KPIs.

- Intelligent Exception Handling: Rather than simply failing when a business rule is triggered, reliable agents must be engineered for intelligent routing. This means the agent gathers all relevant state data and context for the “edge case” and routes it to a human supervisor, ensuring the workflow continues even when the AI reaches its reasoning limits.

- Resilient Integration Chaining: Agents often trigger multiple sequential steps across ERP and CRM stacks. Ensuring reliability across these chains requires a secure, governed bridge that validates each step end-to-end, preventing a single flawed decision from corrupting real downstream systems.
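
One simple way to combine the self-healing and exception-routing ideas above is to retry transient failures with exponential backoff and, once retries are exhausted, hand the step to a human together with its context. The sketch below assumes hypothetical names throughout (`TransientError`, `HumanQueue`, `run_step`, `make_flaky`); the in-memory queue stands in for whatever ticketing or approval system is actually used.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional


class TransientError(Exception):
    """A failure that may succeed on retry, such as an API timeout."""


@dataclass
class Escalation:
    step: str
    reason: str
    context: Dict[str, Any]


@dataclass
class HumanQueue:
    """In-memory stand-in for a human review queue."""
    items: List[Escalation] = field(default_factory=list)

    def submit(self, escalation: Escalation) -> None:
        self.items.append(escalation)


def run_step(
    step_name: str,
    action: Callable[[], Any],
    context: Dict[str, Any],
    queue: HumanQueue,
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[Any]:
    """Run one step; retry transient errors, escalate everything else."""
    last_error = ""
    for attempt in range(1, max_attempts + 1):
        try:
            return action()
        except TransientError as exc:
            last_error = str(exc)
            if attempt < max_attempts:
                # Exponential backoff: base_delay, 2x, 4x, ...
                sleep(base_delay * 2 ** (attempt - 1))
        except Exception as exc:
            # Non-transient failure: retrying will not help, so escalate now.
            queue.submit(Escalation(step_name, f"non-retryable: {exc}", dict(context)))
            return None
    reason = f"gave up after {max_attempts} attempts: {last_error}"
    queue.submit(Escalation(step_name, reason, dict(context)))
    return None


def make_flaky(failures: int) -> Callable[[], str]:
    """Demo helper: an action that times out `failures` times, then succeeds."""
    calls = {"count": 0}

    def action() -> str:
        calls["count"] += 1
        if calls["count"] <= failures:
            raise TransientError("timeout")
        return "ok"

    return action
```

Returning `None` after escalation lets the caller pause the workflow at that step rather than crash, which keeps the rest of the chain in a known state.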

By adopting these reliability patterns, developers can ensure that Agentic AI systems pass the “Monday morning test,” delivering the consistent performance required for mission-critical enterprise operations.
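
To make the drift-monitoring point concrete, here is a minimal sketch of a rolling-window quality check that flags silent degradation even while the service stays "up". The class name, window size, and threshold are assumptions for illustration; it also assumes some upstream process (human review, sampling, or an evaluator) labels each outcome as correct or not.

```python
from collections import deque
from typing import Deque


class RollingQualityMonitor:
    """Tracks accuracy over the most recent `window` labeled outcomes."""

    def __init__(self, window: int, min_accuracy: float) -> None:
        if window <= 0:
            raise ValueError("window must be positive")
        self._outcomes: Deque[bool] = deque(maxlen=window)
        self._min_accuracy = min_accuracy

    def record(self, was_correct: bool) -> None:
        """Add one labeled outcome; the oldest one drops out when full."""
        self._outcomes.append(was_correct)

    @property
    def accuracy(self) -> float:
        """Fraction of correct outcomes in the window (1.0 when empty)."""
        if not self._outcomes:
            return 1.0
        return sum(self._outcomes) / len(self._outcomes)

    def is_degraded(self) -> bool:
        """Alert only on a full window to avoid noise from tiny samples."""
        window_full = len(self._outcomes) == self._outcomes.maxlen
        return window_full and self.accuracy < self._min_accuracy


monitor = RollingQualityMonitor(window=4, min_accuracy=0.75)
for outcome in (True, True, True, True):
    monitor.record(outcome)
healthy_before = monitor.is_degraded()  # False: 4 of 4 correct

for outcome in (False, False):
    monitor.record(outcome)
degraded_after = monitor.is_degraded()  # True: window is now 2 of 4 correct
```

The same pattern extends to other signals named above, such as hallucination or bias rates, by recording a different boolean per outcome.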

4. The Control Plane: Orchestration and Lifecycle of Agentic AI Systems

The “control plane” of the enterprise serves as the centralized management layer where Agentic AI systems are versioned, monitored, and synchronized. Transitioning from experimental silos to a unified agentic infrastructure requires a structured approach to how these intelligent entities discover tools and retain state across the digital core.

- Standardizing Connectivity with MCP: The Model Context Protocol (MCP) acts as a universal “USB port,” providing a standardized way for AI applications to connect to external tools and data sources and reducing custom integration work.

- Context Persistence and Long-Term Memory: MCP can help agents access external context and tools, but long-term memory, workflow state, and business-rule persistence require separate state-management and governance layers.

- Multi-Agent Orchestration (MAO): Enterprises are moving away from single-threaded automation toward “agent squads” where specialized agents collaborate on complex tasks and share real-time memory.

- The Enterprise Knowledge Graph: Organizations are building structured maps of business logic, data relationships, and context to serve as the “cognitive backbone” for agent reasoning.

- Lifecycle Management Systems: These platforms act as “DevOps for AI workers,” supporting agent versioning, testing, and simulation to ensure continuous improvement without manual reprogramming.

- Outcome-Centric Monitoring: As orchestration matures, monitoring shifts attention from adoption metrics, such as user seats, toward business outcomes such as cases closed, cycle time reduced, or forecasts generated.

This architectural layer ensures that Agentic AI systems are not isolated scripts but integrated components of a broader, self-optimizing enterprise mesh.
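
Since workflow state has to live in its own persistence layer, a long-running agent needs some form of checkpointing so that it can resume a multi-day process without losing its objective. The sketch below uses JSON files as a deliberately simple stand-in for a real state store; `WorkflowState`, `CheckpointStore`, `next_step`, and the step names are all hypothetical.

```python
import json
import tempfile
from dataclasses import asdict, dataclass, field
from pathlib import Path
from typing import Any, Dict, List, Optional


@dataclass
class WorkflowState:
    workflow_id: str
    objective: str
    agent_version: str  # records which agent version produced this state
    completed_steps: List[str] = field(default_factory=list)
    facts: Dict[str, Any] = field(default_factory=dict)


class CheckpointStore:
    """File-based checkpoint store; one JSON file per workflow."""

    def __init__(self, directory: Path) -> None:
        self._dir = directory
        self._dir.mkdir(parents=True, exist_ok=True)

    def _path(self, workflow_id: str) -> Path:
        return self._dir / f"{workflow_id}.json"

    def save(self, state: WorkflowState) -> None:
        # Write to a temp file, then rename, so a crash mid-write does not
        # leave a half-written checkpoint behind.
        target = self._path(state.workflow_id)
        tmp = target.with_suffix(".tmp")
        tmp.write_text(json.dumps(asdict(state)), encoding="utf-8")
        tmp.replace(target)

    def load(self, workflow_id: str) -> WorkflowState:
        data = json.loads(self._path(workflow_id).read_text(encoding="utf-8"))
        return WorkflowState(**data)


def next_step(state: WorkflowState, plan: List[str]) -> Optional[str]:
    """Return the first planned step not yet completed, or None when done."""
    for step in plan:
        if step not in state.completed_steps:
            return step
    return None


PLAN = ["extract_invoice", "validate_vendor", "post_to_erp"]
state = WorkflowState(
    workflow_id="wf-1",
    objective="Post approved invoice to ERP",
    agent_version="1.4.0",
    completed_steps=["extract_invoice", "validate_vendor"],
    facts={"invoice_id": "INV-1"},
)

with tempfile.TemporaryDirectory() as tmp_dir:
    store = CheckpointStore(Path(tmp_dir))
    store.save(state)
    restored = store.load("wf-1")  # e.g. after a restart days later
```

Storing the agent version alongside the state is one way to tie checkpoints back to lifecycle management: a resumed workflow can detect that it was started under a different version and decide whether to continue or re-evaluate.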

5. Production Readiness: A Technical Checklist for Scaling Agentic AI Systems

Moving Agentic AI systems from a successful pilot to full-scale operations requires a final layer of technical validation to ensure the transition from probabilistic reasoning to deterministic system updates is seamless. Engineers must verify that the following mechanisms are in place to manage the high-stakes environment of live ERP and CRM data:

- High-Fidelity Data Mapping and Validation: Developers should utilize libraries like Pydantic or structured output mechanisms to enforce strict JSON schemas on agent responses, ensuring they align with the rigid requirements of ERP APIs.

- Intelligent Exception Handling and Routing: Rather than allowing an agent to fail silently, the architecture must include logic to gather full state context and route “edge cases” to a human supervisor for final approval.

- Autonomous Recovery Mechanisms: The system must be verified for its ability to “self-heal” by detecting and recovering from common operational failures, such as API timeouts or data entry anomalies, without human intervention.

- Identity Scoping and Privilege Audits: Every agent identity (NHI) must be audited to ensure it operates under the principle of least privilege, preventing unauthorized role assumption or full account compromise.

- Runtime Behavior Baselines: Engineering teams should establish a behavioral baseline for each agent category, triggering alerts on deviations such as unusual API calls or unexpected network egress to external destinations.

- Compliance and Auditability Hardwiring: For regulated workflows, agent actions, approvals, inputs, outputs, and policy checks should be logged in a tamper-resistant audit trail appropriate to the applicable compliance regime.
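
One common building block for the audit-trail item above is hash chaining, where each entry's hash covers the previous entry's hash so that any later edit becomes detectable. The sketch below is an assumption about how such a log could look, not a compliance-grade implementation: `AuditTrail` and its fields are hypothetical, timestamps are omitted to keep the example deterministic, and a real system would also need write-once storage and access controls, since an in-memory chain is tamper-evident rather than tamper-proof.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: Dict[str, Any]) -> str:
    """Hash the previous hash together with a canonical JSON payload."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + canonical).encode("utf-8")).hexdigest()


class AuditTrail:
    """Append-only log in which every entry commits to its predecessor."""

    def __init__(self) -> None:
        self._entries: List[Dict[str, Any]] = []

    def append(self, agent_id: str, action: str, details: Dict[str, Any]) -> str:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        payload = {"agent_id": agent_id, "action": action, "details": details}
        entry = {
            "payload": payload,
            "prev_hash": prev_hash,
            "hash": _digest(prev_hash, payload),
        }
        self._entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _digest(prev_hash, entry["payload"]):
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.append("ap-agent", "update_invoice", {"invoice_id": "INV-1", "amount": 120.5})
trail.append("ap-agent", "request_approval", {"invoice_id": "INV-1", "approver": "finance"})
chain_ok = trail.verify()
```

Periodically anchoring the latest hash in an external system is a typical next step, because it prevents someone with full access to the log from silently rebuilding the whole chain.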

6. Conclusion: Achieving Operational Excellence with Agentic AI Systems

The era of “babysitting” experimental AI is ending; the next stage of the enterprise is defined by Agentic AI systems that function as dynamic, self-directed software capabilities. For architects and engineers, this transformation is not just about choosing the smartest model, but about building the governed, reliable, and secure bridge between probabilistic AI and the deterministic core of the business. By shifting from feature-led development to capability-led design, organizations can finally move past “pilot purgatory” and compete on the speed and scale of autonomous value realization. Scaling successfully requires a new operating philosophy where trust is hardwired, outcomes are orchestrated, and reliability is engineered at the core.