AI Agent Reliability: What NIST, OpenAI, and Google Say About Dependable Agents

Smart models are not enough. Reliable AI agents need evaluations, guardrails, observability, and operational control.

If your AI agent can take real actions, who decides whether it is safe enough to trust?

Developers care about AI agent reliability. They know an AI agent must do more than perform well in a demo. It must follow policies, use tools correctly, respect approval boundaries, and remain dependable in real workflows.

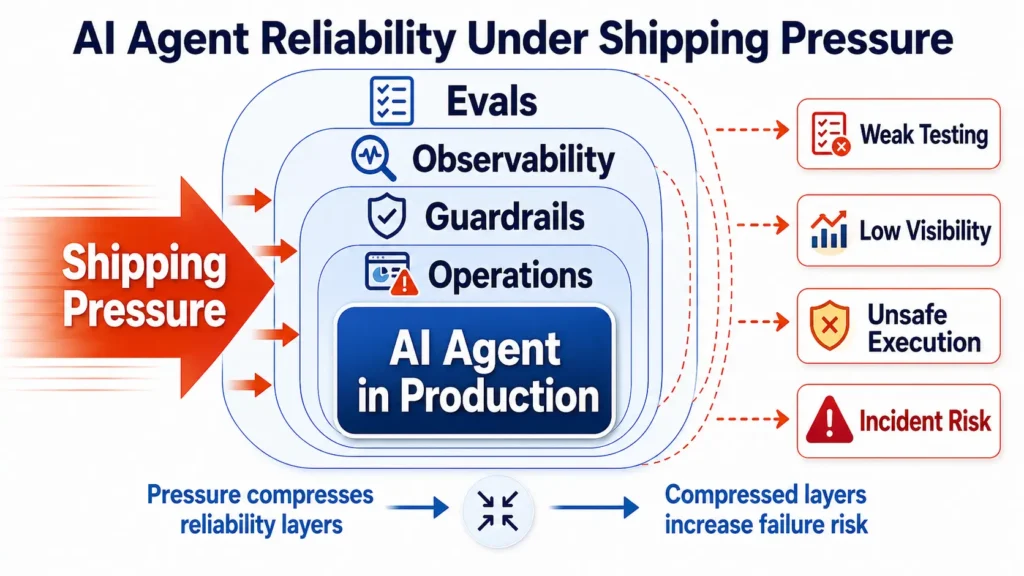

The problem is that reliability work is often compressed by shipping pressure.

Evaluation sets remain thin. Guardrails are added late. Observability is postponed. Human approval gates are discussed, but not always built into the execution path. As a result, an AI agent may look impressive in testing but fail when connected to enterprise data, APIs, customers, or internal operations.

This is why AI agent reliability is different from traditional software reliability. AI agents reason probabilistically, select tools, retrieve information, and act across multi-step workflows. Their failures are harder to predict, test, and trace.

NIST, OpenAI, and Google are pointing in the same direction: dependable AI agents need evaluations, observability, guardrails, controlled execution, and operational monitoring.

This is also where the AI Value Gap becomes visible: the distance between an impressive AI agent demo and a dependable production system that can be trusted inside real workflows.

The core issue is not only model intelligence. It is system dependability.

If teams are too busy for AI agent reliability today, they may soon be busy with incidents tomorrow.

1. Why AI Agent Reliability Still Breaks Down in Practice

AI agent reliability does not usually break down because developers ignore it. It breaks down because agentic systems create new failure patterns while delivery pressure leaves too little time to evaluate, observe, and control them properly.

Traditional software usually follows predefined logic. AI agents are different. They can interpret goals, retrieve information, select tools, call APIs, and act across multiple steps. That means the reliability problem is no longer only about whether the code works. It is also about whether the agent behaves safely inside a real workflow.

Several practical reasons explain why AI agent reliability still breaks down:

• AI agents behave probabilistically

The same or similar input may not always produce the same result. This makes testing, debugging, and regression control harder.

• Tool use expands the failure surface

An agent may query databases, call APIs, update records, or trigger workflows. Reliability depends on whether it chooses the right tool, uses the right data, and follows the right permissions.

• Multi-step workflows compound small errors

A small mistake early in the workflow can create a larger problem later. The agent may retrieve the wrong information, make a weak assumption, and then take an action based on that mistake.

• Policy boundaries are easy to blur

A reliable agent must know when to act, when not to act, when to ask for approval, and when to escalate to a human. Without clear boundaries, the agent may complete the task but violate the process.

• Failure definitions are often too weak

It is easy to ask, “Did the agent complete the task?” It is harder to ask, “Did it complete the task correctly, safely, and within policy?”

• Ownership is fragmented

AI agent reliability often sits between model teams, application teams, security teams, data teams, and operations teams. When no one owns the full workflow, important risks can fall through the gaps.

• Observability is added too late

Without traces, logs, metrics, and workflow history, teams may not know why the agent made a decision, what tool it used, or where the failure began.

• Execution risk appears only once the agent touches real systems

Many risks become visible only when the agent is connected to real systems. A chatbot that gives a weak answer may create inconvenience. An agent that updates a procurement record, changes an ERP field, sends a customer response, or triggers an internal workflow can create operational damage.

This is why AI agent reliability cannot be treated as a final checklist before deployment. It must be designed into the system from the beginning.
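One way to make the points about probabilistic behavior and weak failure definitions concrete is to run the same task several times and count only strict successes. The sketch below is illustrative, not a definitive harness: `RunResult`, `is_success`, `pass_rate`, and the stub agent are hypothetical names introduced here, and a real agent call would replace the stub.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class RunResult:
    completed: bool       # the agent reported finishing the task
    correct: bool         # the outcome matched the expected result
    within_policy: bool   # no approval or permission rule was bypassed


def is_success(result: RunResult) -> bool:
    """Strict definition: completion alone does not count as success."""
    return result.completed and result.correct and result.within_policy


def pass_rate(run_agent: Callable[[str], RunResult], task: str, trials: int) -> float:
    """Run the same task several times and return the share of strict successes."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    successes = sum(is_success(run_agent(task)) for _ in range(trials))
    return successes / trials


def make_stub_agent() -> Callable[[str], RunResult]:
    """Hypothetical stand-in for a real agent: cycles through fixed outcomes."""
    outcomes = [
        RunResult(completed=True, correct=True, within_policy=True),
        RunResult(completed=True, correct=True, within_policy=False),  # skipped an approval
        RunResult(completed=True, correct=False, within_policy=True),  # wrong result
        RunResult(completed=True, correct=True, within_policy=True),
    ]
    state = {"calls": 0}

    def run(task: str) -> RunResult:
        result = outcomes[state["calls"] % len(outcomes)]
        state["calls"] += 1
        return result

    return run


if __name__ == "__main__":
    rate = pass_rate(make_stub_agent(), "review supplier request", trials=4)
    print(f"strict pass rate: {rate:.2f}")
```

In this stub every run "completes", yet only half of the runs pass the stricter definition. That gap is the kind of signal a completion-only metric would hide.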

The better question is not only, “Does the agent work in a demo?”

It is, “Can this AI agent behave dependably when it reasons, selects tools, and takes action inside a real workflow?”

2. What NIST, OpenAI, and Google Are Converging On About AI Agent Reliability

NIST, OpenAI, and Google approach AI agent reliability from different directions, but their message is becoming similar: reliable AI agents need more than strong model performance. They need a system that can evaluate behavior, observe execution, enforce boundaries, and manage risk after deployment.

NIST provides the risk-management frame. Its AI Risk Management Framework is organized around four functions: Govern, Map, Measure, and Manage. For AI agents, this means teams should define risks early, assign ownership, measure failure modes, and manage reliability as part of the operating model around the agent.

OpenAI focuses more directly on agent workflows. Its guidance emphasizes evaluations, traces, guardrails, and observability. These controls help teams understand how an agent reasons, what tools it calls, where it fails, and when human review is needed.

Google points in the same direction for enterprise agents. Its approach emphasizes evaluating not only the final response, but also the agent’s trajectory, including tool use, intermediate steps, and workflow behavior.
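To illustrate what scoring a trajectory rather than only a final response can look like, here is a minimal sketch. It does not use any vendor's evaluation API; `TrajectoryScore`, `score_trajectory`, and the tool names are assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TrajectoryScore:
    final_answer_ok: bool
    missing_tools: List[str]      # expected tools the agent never called
    unexpected_tools: List[str]   # tools called that were not expected
    in_order: bool                # expected tools appeared in the expected order

    @property
    def passed(self) -> bool:
        return (
            self.final_answer_ok
            and not self.missing_tools
            and not self.unexpected_tools
            and self.in_order
        )


def score_trajectory(
    expected_tools: List[str],
    actual_tools: List[str],
    expected_answer: str,
    actual_answer: str,
) -> TrajectoryScore:
    """Compare the path the agent took with the path the test case expects."""
    missing = [t for t in expected_tools if t not in actual_tools]
    unexpected = [t for t in actual_tools if t not in expected_tools]
    # Subsequence check: each expected tool must appear after the previous one.
    remaining = iter(actual_tools)
    in_order = all(tool in remaining for tool in expected_tools)
    return TrajectoryScore(
        final_answer_ok=(expected_answer == actual_answer),
        missing_tools=missing,
        unexpected_tools=unexpected,
        in_order=in_order,
    )


if __name__ == "__main__":
    score = score_trajectory(
        expected_tools=["get_supplier", "get_contract", "compare_pricing"],
        actual_tools=["get_supplier", "compare_pricing"],
        expected_answer="approve",
        actual_answer="approve",
    )
    print(score.passed, score.missing_tools)
```

In the usage example the final answer matches, but the run still fails because the contract lookup was skipped. An exact string match on the answer is a simplification; a real evaluation would likely use a more tolerant comparison.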

The convergence is clear. AI agent reliability requires five practical capabilities:

• Evaluation

Teams need test sets, regression checks, edge-case scenarios, and workflow-level scorecards. The goal is to test not only the final answer, but also the path the agent followed.

• Observability

Teams need traces, logs, metrics, and dashboards that show what the agent did, what tools it used, what data it retrieved, and where the workflow changed direction.

• Guardrails

Agents need policy rules, permission limits, validation checks, and approval gates so they do not complete tasks in unsafe or unauthorized ways.

• Controlled execution

The more an agent can act, the more carefully its actions must be bounded. Not every action carries the same risk: sending a message, updating a record, approving a transaction, and triggering a workflow each warrant a different level of control.

• Operational monitoring

Reliability does not end at launch. Agents need alerting, incident review, rollback plans, and continuous improvement as workflows, data, policies, and users change.
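The idea that different actions deserve different levels of control can be sketched as a simple lookup with a deny-by-default fallback. The action names, the `Control` tiers, and the specific assignments below are illustrative assumptions, not a recommended policy.

```python
from enum import Enum


class Control(Enum):
    AUTO = "auto"                      # execute without review
    LOG_AND_NOTIFY = "log_and_notify"  # execute, but record and notify an owner
    HUMAN_APPROVAL = "human_approval"  # hold until a person approves
    BLOCKED = "blocked"                # never executed by the agent


# Hypothetical mapping; each team would assign tiers from its own risk review.
ACTION_CONTROLS = {
    "read_record": Control.AUTO,
    "send_message": Control.LOG_AND_NOTIFY,
    "update_record": Control.HUMAN_APPROVAL,
    "trigger_workflow": Control.HUMAN_APPROVAL,
    "approve_transaction": Control.HUMAN_APPROVAL,
}


def required_control(action: str) -> Control:
    """Return the control tier for an action; unknown actions are blocked."""
    return ACTION_CONTROLS.get(action, Control.BLOCKED)


if __name__ == "__main__":
    for name in ("read_record", "approve_transaction", "delete_vendor"):
        print(name, "->", required_control(name).value)
```

Blocking unknown actions by default means a newly added tool cannot run unreviewed simply because nobody has classified it yet.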

The important shift is this: AI agent reliability is not only a model-quality problem. It is a system-dependability problem.

NIST gives the risk frame. OpenAI highlights agent workflow controls. Google emphasizes evaluation across responses and trajectories. Together, they point to one conclusion: dependable AI agents require a reliability architecture around the model.

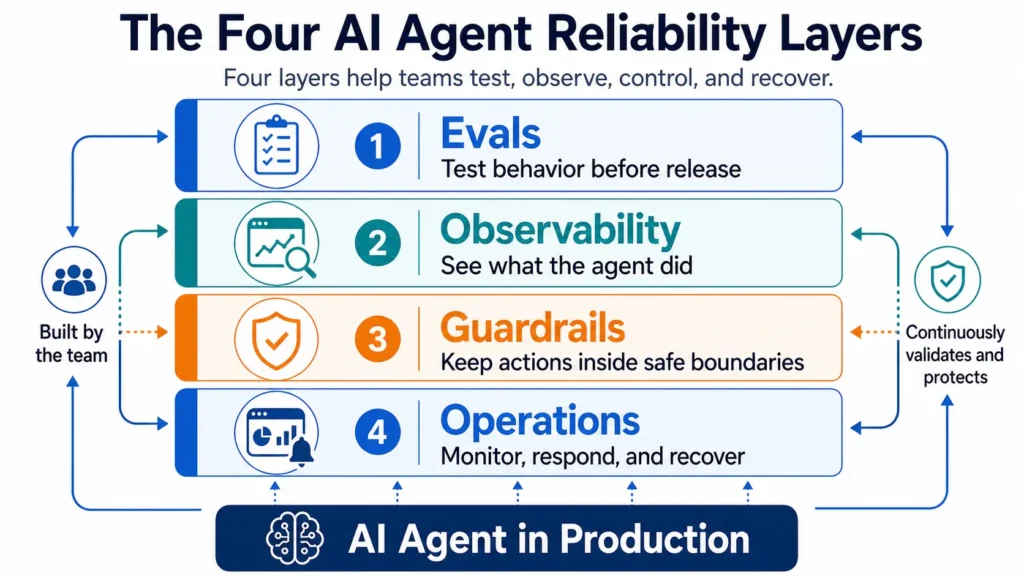

3. The Four AI Agent Reliability Layers Teams Actually Need

AI agent reliability cannot depend on a final review before launch. Teams need four practical layers around the agent: evaluations, observability, guardrails, and operations. Each layer catches a different type of risk.

• Evals: Testing Whether the Agent Behaves Correctly

Evaluations check whether the agent completes the task correctly, safely, and within policy.

For AI agents, this means testing more than the final answer. Teams also need to test whether the agent understood the user’s intent, used the right data source, selected the correct tool, followed approval rules, and avoided unsupported actions.

Useful evals include test sets, regression checks, edge-case scenarios, and agent scorecards. The key question is not only, “Was the answer good?” It is also, “Did the agent reach that answer through a reliable path?”

• Observability: Seeing What the Agent Actually Did

Observability gives teams visibility into the agent’s behavior. Without it, teams may see the final output but miss the hidden workflow behind it.

A production agent needs traces of model calls, tool calls, retrieved data, system responses, retries, failures, and escalations. These records help teams understand why the agent made a decision and where a failure began.

Observability turns the agent from a black box into an inspectable workflow.

• Guardrails: Keeping the Agent Inside Safe Boundaries

Guardrails define what the agent can do, cannot do, and must escalate.

This includes input checks, output validation, permission limits, approval gates, policy rules, and human review for high-risk actions. Guardrails are especially important when an agent can update records, send messages, trigger workflows, or interact with enterprise systems.

The goal is not to slow innovation. The goal is to prevent the agent from acting beyond its authority.

• Operations: Managing Reliability After Deployment

AI agent reliability does not end when the system goes live. Production agents need ongoing monitoring, alerting, incident review, rollback workflows, and continuous improvement.

Operations answers practical questions: Who is notified when the agent fails? Can the workflow be paused? Can a bad action be rolled back? How are incidents translated into better evals, guardrails, or monitoring rules?

This matters because workflows, data, policies, tools, and users change over time. A reliable agent must be managed as a living production system.
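The operational question "Can the workflow be paused?" can be made concrete with a small circuit breaker that pauses the agent when recent failures exceed a threshold. This is a sketch under stated assumptions: `AgentCircuitBreaker` and its default values are hypothetical, and a production version would also need alerting and persistence.

```python
from collections import deque


class AgentCircuitBreaker:
    """Pause an agent workflow when the recent failure rate gets too high."""

    def __init__(self, window: int = 20, max_failure_rate: float = 0.2,
                 min_samples: int = 5) -> None:
        self.outcomes = deque(maxlen=window)   # rolling window of True/False results
        self.max_failure_rate = max_failure_rate
        self.min_samples = min_samples
        self.paused = False

    def failure_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return self.outcomes.count(False) / len(self.outcomes)

    def record(self, success: bool) -> None:
        """Record one run outcome and pause if the threshold is exceeded."""
        self.outcomes.append(success)
        enough_data = len(self.outcomes) >= self.min_samples
        if enough_data and self.failure_rate() > self.max_failure_rate:
            self.paused = True   # stays paused until a person calls resume()

    def resume(self) -> None:
        """Called by a human owner after incident review."""
        self.outcomes.clear()
        self.paused = False


if __name__ == "__main__":
    breaker = AgentCircuitBreaker(window=10, max_failure_rate=0.2, min_samples=5)
    for outcome in (True, True, True, True, False, False):
        breaker.record(outcome)
    print("paused:", breaker.paused)
```

Requiring an explicit `resume()` call is a deliberate design choice in this sketch: the agent does not resume on its own, so a person reviews the incident before work continues.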

Why These Layers Matter Together

Each layer protects the system in a different way.

Evals test behavior.

Observability reveals what happened.

Guardrails prevent unsafe action.

Operations helps teams respond and improve.

A smart model may produce useful answers. But a dependable AI agent needs a system around it that can test, observe, control, and recover.

4. A Concrete Enterprise Failure Example

Consider an AI agent assigned to support procurement operations. A manager asks the agent to review a supplier request and prepare an approval recommendation. The task sounds simple, but the workflow depends on several hidden reliability conditions.

The agent must retrieve the correct supplier record, check contract terms, compare pricing history, review budget limits, detect policy exceptions, and decide whether human approval is required. If any step is weak, the final recommendation may look reasonable but still be wrong.

The failure may begin quietly.

• Without evals, the team may never test enough procurement edge cases, such as expired contracts, duplicate vendors, unusual price increases, or approval thresholds.

• Without observability, no one may see that the agent used an outdated contract file or skipped a required pricing comparison.

• Without guardrails, the agent may recommend approval even though the request exceeds its authority or requires legal review.

• Without operations, the company may not have a clear alert, rollback, or incident process when the wrong recommendation reaches the procurement team.

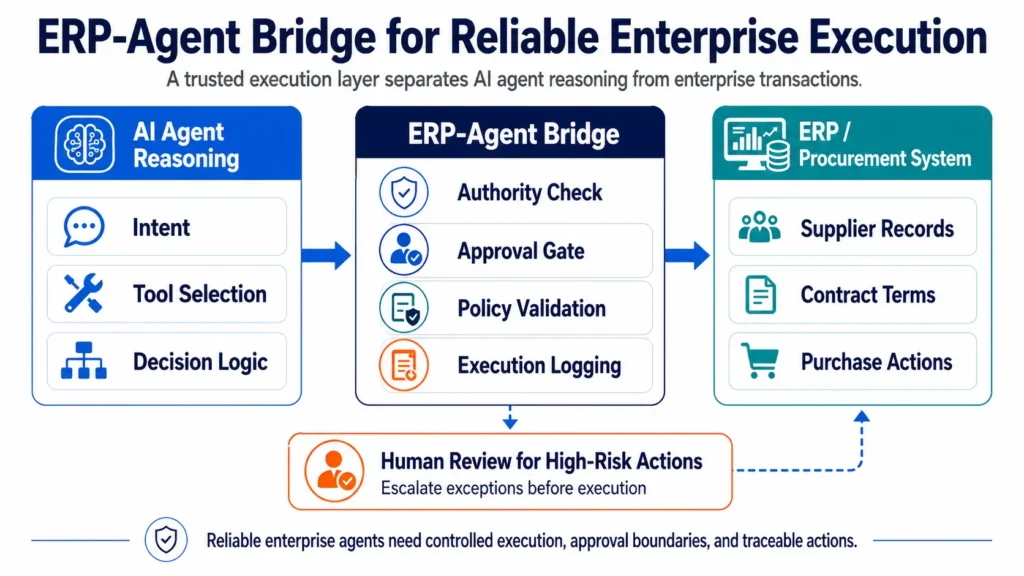

This is where the ERP-Agent Bridge becomes important. AI agents need a trusted execution layer between agent reasoning and enterprise transactions. The bridge helps define which data source is authoritative, which actions require approval, which tool calls are permitted, and how exceptions should be escalated before the agent changes real business records.
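The bridge's responsibilities described above (authoritative data sources, permitted tool calls, and approval requirements) can be sketched as a single check that runs before any transaction is executed. Everything named here, including `BridgePolicy`, `ProposedAction`, `review_action`, and the example tool and source names, is a hypothetical illustration rather than an existing product API.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class BridgePolicy:
    permitted_tools: FrozenSet[str]
    authoritative_sources: FrozenSet[str]
    approval_threshold: float   # amounts above this need human approval


@dataclass(frozen=True)
class ProposedAction:
    tool: str          # the tool call the agent wants to make
    data_source: str   # where the agent got the data behind its decision
    amount: float      # transaction value in the company's base currency


def review_action(action: ProposedAction, policy: BridgePolicy) -> Tuple[str, str]:
    """Return (decision, reason), where decision is allow, escalate, or reject."""
    if action.tool not in policy.permitted_tools:
        return ("reject", f"tool '{action.tool}' is not permitted")
    if action.data_source not in policy.authoritative_sources:
        return ("reject", f"data source '{action.data_source}' is not authoritative")
    if action.amount > policy.approval_threshold:
        return ("escalate", "amount exceeds the approval threshold")
    return ("allow", "within policy")


if __name__ == "__main__":
    policy = BridgePolicy(
        permitted_tools=frozenset({"update_purchase_order"}),
        authoritative_sources=frozenset({"erp_contracts"}),
        approval_threshold=10_000.0,
    )
    stale = ProposedAction("update_purchase_order", "shared_drive_copy", 2_500.0)
    print(review_action(stale, policy))
```

In the usage example the action is rejected even though the amount is small, because the agent relied on a copy of the contract rather than the authoritative record. That mirrors the outdated-contract failure in the procurement scenario.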

In this example, the problem is not that the model is useless. The problem is that the system around the model is too weak to verify, control, and recover from the agent’s actions.

That is why dependable AI agents need reliability layers before they are trusted with real operational work.

5. Why AI Agent Reliability Is More About System Dependability Than Model Intelligence

It is tempting to frame AI agent reliability as a model problem. If the model becomes smarter, the agent should become more reliable. But in production, that is only partly true.

A smarter model can still fail inside a weak system.

If the data source is outdated, the approval rule is unclear, or the tool permission is too broad, the agent may produce a confident decision that still creates risk. The problem is not only what the model knows. It is also what the system allows the agent to see, decide, and do.

This is why AI agent reliability should be treated as a dependability problem. A dependable system does not assume every action will be correct. It is designed to prevent unsafe actions, detect weak signals, explain what happened, and recover when something fails.

For enterprise AI agents, intelligence is only one layer. The larger question is whether the full system is measurable, visible, bounded, and recoverable.

That is the shift leaders need to understand: the future of reliable AI agents will not be decided by model intelligence alone. It will be decided by the reliability architecture built around the model.

6. What Developers, Engineers, and CEOs Should Do Next

AI agent reliability is not owned by one role. It requires different questions from different people.

• Developers should instrument the workflow

Developers need to make agent behavior visible. That means tracing model calls, tool calls, retrieved data, intermediate decisions, failures, retries, and escalations. If an agent fails, the team should be able to answer what happened, where it happened, and why it happened.

• Engineers should design for controlled execution

Engineers need to treat the agent as part of a larger production system. That means defining tool permissions, approval gates, fallback paths, rollback options, and monitoring rules. The goal is not only to make the agent useful, but to make its actions bounded and recoverable.

• CEOs should ask deployment-level questions

CEOs do not need to inspect every technical detail, but they should ask the right reliability questions before deployment. What can this agent actually do? What systems can it access? What actions require human approval? How do we know when it fails? Who owns incident response?
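For the developer guidance on instrumenting the workflow, a minimal trace recorder might look like the sketch below. It is not tied to any tracing library; `Trace`, `TraceStep`, `call_tool`, and the example tools are hypothetical names, and a real system would more likely export these records to an existing observability backend.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional


@dataclass
class TraceStep:
    kind: str                 # for example "tool_call" or "model_call"
    name: str
    inputs: Dict[str, Any]
    output: Any = None
    error: str = ""
    duration_s: float = 0.0


@dataclass
class Trace:
    run_id: str
    steps: List[TraceStep] = field(default_factory=list)

    def call_tool(self, name: str, fn: Callable[..., Any], **kwargs: Any) -> Any:
        """Run a tool and record inputs, output, error, and duration."""
        step = TraceStep(kind="tool_call", name=name, inputs=kwargs)
        start = time.perf_counter()
        try:
            step.output = fn(**kwargs)
            return step.output
        except Exception as exc:
            step.error = f"{type(exc).__name__}: {exc}"
            raise   # the failure is recorded, not swallowed
        finally:
            step.duration_s = time.perf_counter() - start
            self.steps.append(step)

    def first_failure(self) -> Optional[TraceStep]:
        """Return the earliest failed step, which is where the failure began."""
        return next((s for s in self.steps if s.error), None)


if __name__ == "__main__":
    contracts = {"V-1": "valid until 2026"}
    trace = Trace(run_id="demo-run")
    trace.call_tool("get_supplier", lambda supplier_id: {"id": supplier_id}, supplier_id="V-9")
    try:
        trace.call_tool("get_contract", lambda supplier_id: contracts[supplier_id], supplier_id="V-9")
    except KeyError:
        pass
    failed = trace.first_failure()
    print(failed.name, failed.error)
```

With a record like this, the team can answer what happened (the step list), where it happened (`first_failure`), and at least part of why (the recorded inputs and error).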

The key point is simple: AI agent reliability is not a feature added at the end. It is a shared responsibility across development, engineering, operations, and leadership.

7. Conclusion: Too Busy for AI Agent Reliability Means Too Busy With Incidents Later

AI agents are moving from conversation to action. They can retrieve data, call tools, trigger workflows, and support real business decisions. That makes reliability more important, not less.

The lesson from NIST, OpenAI, and Google is clear: dependable AI agents require evaluations, observability, guardrails, controlled execution, and operational monitoring.

Teams may feel too busy to build these layers today. But when an AI agent acts on the wrong data, skips an approval step, or triggers the wrong workflow, the time saved during deployment can quickly become time spent on incidents.

If teams are too busy for AI agent reliability today, they may soon be busy with incidents tomorrow.