Agentic Systems at Scale: Key AI Developments in Late March 2026

Subagents, computer use, memory, and operational economics are reshaping enterprise AI

What happens when AI stops acting like a model and starts operating like a system?

The key AI developments in late March 2026 suggest that this transition is accelerating. In early March, the focus was on execution-oriented AI. By late March, the shift became more concrete. New releases and announcements showed AI systems beginning to coordinate subagents, interact directly with software environments, maintain persistent memory, and operate under tighter cost constraints.

These changes are not incremental improvements in model capability. They reflect a deeper transformation in how AI systems are structured. Instead of relying on a single model to generate outputs, AI is increasingly built as a coordinated system that decomposes tasks, manages state across steps, integrates with tools, and adapts during execution.

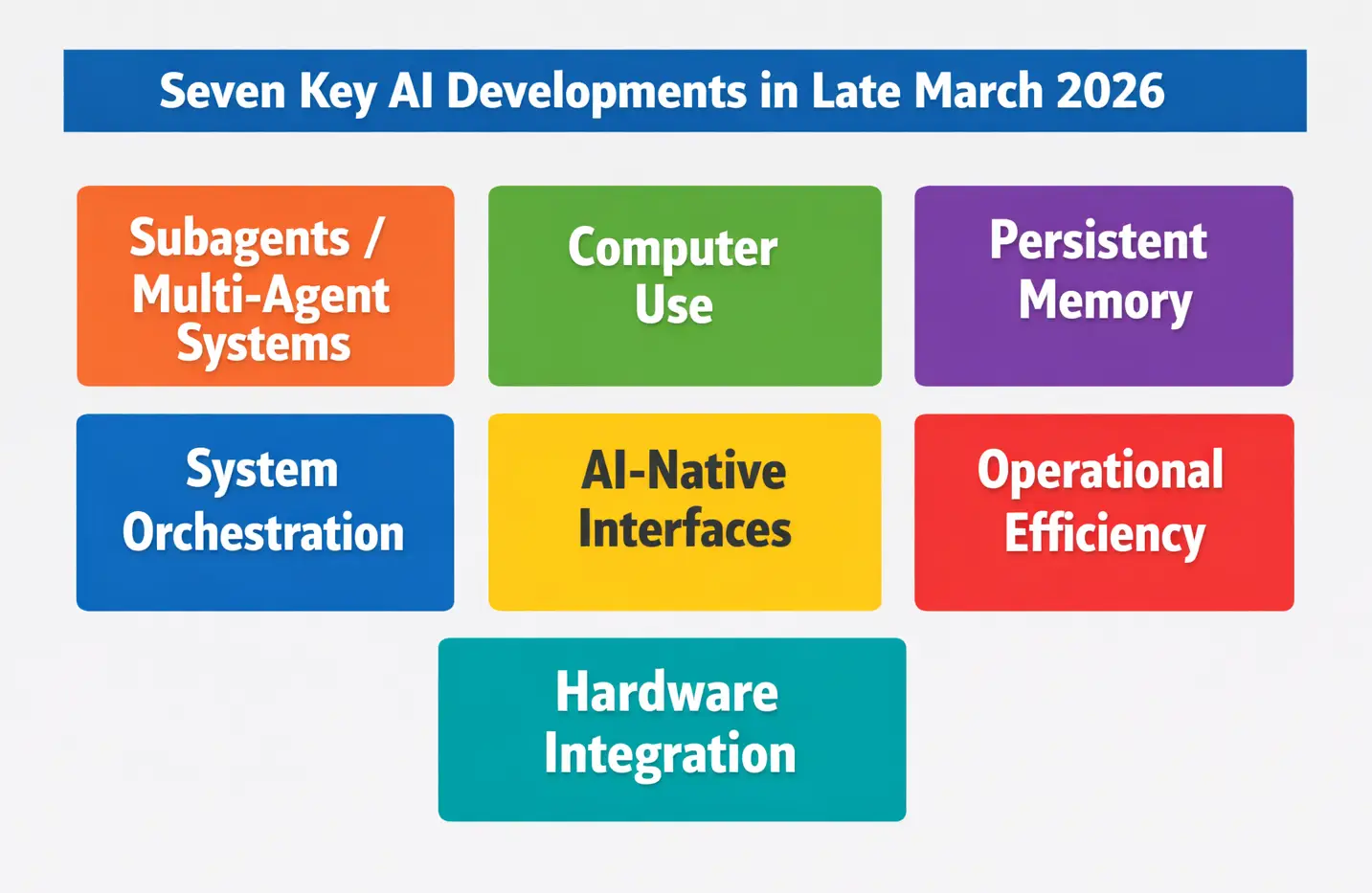

This post examines the key AI developments in late March 2026 through this lens. It introduces seven developments, explains the five technical characteristics behind them, and explores where these changes are likely to shape real-world applications.

1. Seven Key AI Developments in Late March 2026

The key AI developments in late March 2026 show that AI is no longer evolving mainly through larger models or benchmark improvements. Instead, progress is increasingly driven by how systems coordinate agents, memory, tools, and execution under real-world constraints. The following developments highlight this shift.

1️⃣ Subagents and Multi-Agent Architectures

In mid-March, OpenAI introduced subagents within its development tools, while Anthropic expanded task continuity through Claude Code workflows. Technically, this reflects a move from single-model pipelines to multi-agent architectures, where tasks are decomposed into specialized roles such as planning, execution, and verification.

This approach improves modularity and parallelism. In practice, it enables more scalable automation in software development, research workflows, and complex enterprise processes where tasks cannot be handled in a single step.
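To make the decomposition concrete, here is a minimal sketch of a planner, executor, and verifier wired together. It is not how any vendor's subagents are implemented. The function names (`plan`, `execute`, `verify`, `run_pipeline`) are hypothetical, the "model calls" are trivial stand-ins, and the steps are assumed to be independent so they can run in parallel.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class StepResult:
    step: str
    output: str
    verified: bool


def plan(task: str) -> list[str]:
    """Planner role: split a task into steps (here, naively on ';')."""
    return [s.strip() for s in task.split(";") if s.strip()]


def execute(step: str) -> str:
    """Executor role: stand-in for a model or tool call."""
    return step.upper()


def verify(step: str, output: str) -> bool:
    """Verifier role: a trivial check that the output corresponds to the step."""
    return bool(output) and output.lower() == step.lower()


def run_pipeline(task: str) -> list[StepResult]:
    steps = plan(task)
    # Assumption: steps are independent, so they can be executed in parallel.
    # pool.map returns outputs in the same order as the input steps.
    with ThreadPoolExecutor() as pool:
        outputs = list(pool.map(execute, steps))
    return [StepResult(s, o, verify(s, o)) for s, o in zip(steps, outputs)]
```

Calling `run_pipeline("write tests; fix bug")` yields one `StepResult` per step. Keeping each role behind its own function is what lets one role be swapped or scaled without touching the others.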

2️⃣ Direct Computer Use and Software Interaction

Between March 20 and March 31, Anthropic and Perplexity advanced AI systems that can directly control software environments. Instead of only generating instructions for a person to follow, these systems can operate interfaces, execute commands, and complete tasks inside real applications.

Technically, this introduces action capability as a core function of AI systems. It reduces the gap between decision and execution, making AI more suitable for operational workflows such as support automation, data processing, and system administration.

3️⃣ Persistent Memory as a System Layer

On March 23, Supermemory demonstrated significant improvements in long-term agent memory, showing that memory can be managed as a structured system component rather than a simple context extension. This enables AI systems to maintain state across multiple steps and sessions.

From a technical perspective, this changes how workflows are designed. Instead of reloading context for each task, systems can build continuity over time. This is particularly important for applications such as document analysis, research assistance, and long-running enterprise processes.
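The difference between reloading context and building continuity can be illustrated with a small sketch. This is not Supermemory's design. The `MemoryStore` class is a hypothetical, file-backed key-value store, assumed here only to show state surviving across sessions.

```python
import json
from pathlib import Path


class MemoryStore:
    """Hypothetical persistent memory: a JSON file that outlives a single session."""

    def __init__(self, path):
        self.path = Path(path)
        # Load earlier state if a previous session left any behind.
        if self.path.exists():
            self.records = json.loads(self.path.read_text())
        else:
            self.records = {}

    def remember(self, key, value):
        """Store a fact and write it to disk immediately."""
        self.records[key] = value
        self.path.write_text(json.dumps(self.records))

    def recall(self, key, default=None):
        """Return a stored fact, or the default if nothing is known."""
        return self.records.get(key, default)
```

A second `MemoryStore` opened on the same path sees what the first one stored, which is the property long-running workflows rely on. A production memory layer would also need retrieval, expiry, and conflict handling, none of which this sketch attempts.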

4️⃣ Orchestration as a Core System Function

As subagents, memory, and tool use expand, the main challenge shifts to orchestration. Late-March developments across OpenAI, Anthropic, and related tools show that coordinating multiple components is now a central engineering problem.

Technically, orchestration determines how tasks are scheduled, how agents communicate, and how errors are managed. Systems with poor orchestration may fail even if individual components perform well. This makes orchestration a key factor in scaling AI beyond isolated use cases.
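As a rough illustration of scheduling and error handling, the sketch below orders tasks by their dependencies, retries failures, and skips anything downstream of a failed task. The `orchestrate` function and its parameters are hypothetical. It uses `graphlib.TopologicalSorter` from the Python standard library (3.9+) and assumes tasks are plain callables that receive the results gathered so far.

```python
from graphlib import TopologicalSorter


def orchestrate(tasks, deps, max_retries=2):
    """Run tasks in dependency order.

    tasks: name -> callable taking the dict of results so far
    deps:  name -> set of prerequisite task names
    Returns (results, failed), where failed includes skipped downstream tasks.
    """
    graph = {name: set(deps.get(name, ())) for name in tasks}
    order = list(TopologicalSorter(graph).static_order())
    results, failed = {}, set()
    for name in order:
        # Error containment: do not run a task whose prerequisite failed.
        if graph[name] & failed:
            failed.add(name)
            continue
        for attempt in range(max_retries + 1):
            try:
                results[name] = tasks[name](results)
                break
            except Exception:
                # Give up only after the final allowed attempt.
                if attempt == max_retries:
                    failed.add(name)
    return results, failed
```

The design choice worth noting is that a failure is recorded rather than raised, so one broken branch does not stop unrelated branches from completing.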

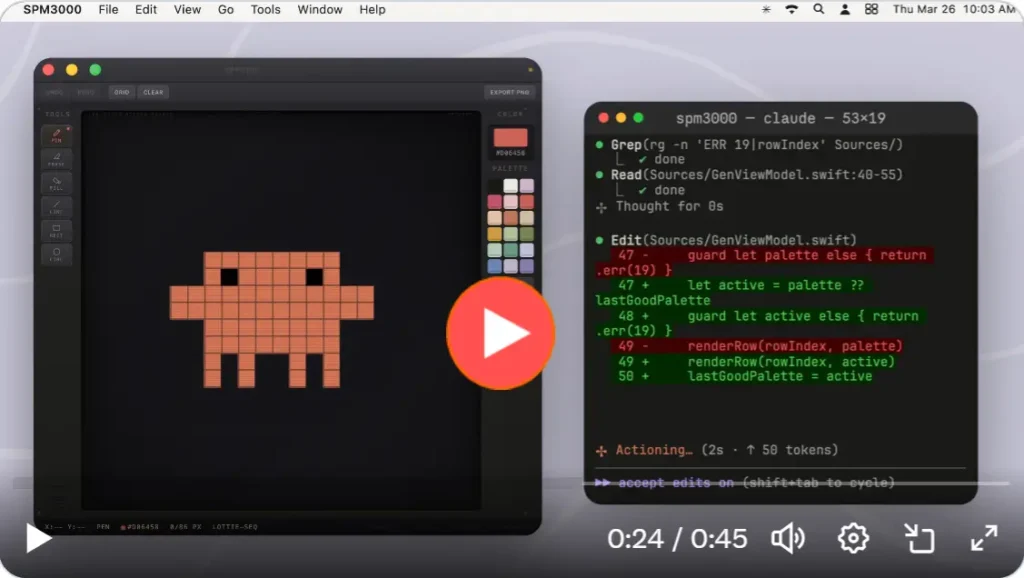

5️⃣ AI-Native Development Interfaces

Around March 19–20, tools such as Cursor Composer 2 and Google Stitch introduced new development paradigms based on intent-driven design. Instead of writing code step by step, developers can define objectives and rely on AI systems to generate and refine solutions.

This reflects a shift toward AI-mediated development environments, where the role of the developer moves from implementation to orchestration. The result is faster iteration and potentially lower development cost, especially for complex or repetitive tasks.

6️⃣ Operational Economics and Efficiency

By late March, multiple announcements emphasized efficiency rather than capability. Google’s TurboQuant highlighted memory compression techniques, while tools like Cursor focused on reducing cost per task.

Technically, this introduces economic constraints as part of system design. Performance is no longer measured only by accuracy, but also by latency, memory usage, and cost. This is critical for enterprise deployment, where scalability depends on sustainable operating cost.
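One way to make these constraints measurable is to track cost per successful task alongside latency. The sketch below is an assumption-laden example: the `TaskRun` fields, the function names, and the token-based pricing model are all hypothetical, and real pricing varies by provider.

```python
from dataclasses import dataclass


@dataclass
class TaskRun:
    input_tokens: int
    output_tokens: int
    latency_s: float
    succeeded: bool


def cost_per_successful_task(runs, usd_per_1k_input, usd_per_1k_output):
    """Total spend divided by the number of runs that actually succeeded."""
    total = sum(
        r.input_tokens / 1000 * usd_per_1k_input
        + r.output_tokens / 1000 * usd_per_1k_output
        for r in runs
    )
    successes = sum(1 for r in runs if r.succeeded)
    # Failed runs still cost money, so with zero successes the cost is unbounded.
    return total / successes if successes else float("inf")


def mean_latency(runs):
    """Average wall-clock latency in seconds across all runs."""
    return sum(r.latency_s for r in runs) / len(runs)
```

Dividing by successes rather than attempts means retries and failed runs raise the reported cost, which is closer to what an operator actually pays per completed unit of work.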

7️⃣ Integration of Hardware and Agentic Systems

At NVIDIA GTC (March 17) and in subsequent robotics-related announcements, the industry showed increasing integration between AI systems and hardware platforms. Agent-based AI is being connected with robotics, accelerators, and real-time environments.

This signals a move toward end-to-end systems that combine perception, decision, and action. It expands AI applications beyond software into physical systems such as logistics, manufacturing, and robotics.

Section Synthesis

Taken together, these developments show that late March 2026 marks a transition toward coordinated, system-level AI. The defining change is not a single breakthrough, but the integration of multiple capabilities into systems that can act, adapt, and scale.

2. The System Shift: Five Technical Characteristics Behind These Developments

The key AI developments in late March 2026 point to five technical characteristics that now define AI progress at the system level.

- Multi-Agent Decomposition: AI systems are increasingly organized as specialized agents that divide planning, execution, verification, and memory across multiple roles.

- Persistent Memory and Context Management: Memory is becoming a system layer that maintains continuity across tasks instead of relying only on larger context windows.

- Tool and Environment Integration: AI is moving from generating outputs to directly operating inside software tools and digital environments.

- Execution Feedback Loops: Reliable AI now depends on monitoring, validation, and correction across multi-step workflows.

- Orchestration Efficiency as the New Metric: Latency, throughput, memory use, and cost per task are becoming as important as model capability.
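Of the five characteristics, the execution feedback loop is the easiest to show in a few lines. The sketch below is a generic validate-and-correct loop, not any specific product's mechanism. The names `run_with_feedback`, `generate`, and `validate` are hypothetical, and `generate` is assumed to accept the previous validation message so it can correct itself.

```python
def run_with_feedback(generate, validate, max_attempts=3):
    """Generate an output, validate it, and feed failures back into the next attempt.

    generate: callable taking the previous feedback (or None) and returning an output
    validate: callable returning (ok, feedback_message)
    Returns (output, attempts_used) or raises if no attempt passes validation.
    """
    feedback = None
    for attempt in range(1, max_attempts + 1):
        output = generate(feedback)
        ok, feedback = validate(output)
        if ok:
            return output, attempt
    raise RuntimeError(
        f"no valid output after {max_attempts} attempts: {feedback}"
    )
```

The loop is bounded on purpose: a cap on attempts keeps correction from turning into unbounded latency and cost.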

Section Synthesis

Taken together, these characteristics show that AI is no longer advancing mainly as a standalone model. It is evolving into a coordinated system designed to execute, adapt, and scale under real operating constraints.

3. From Technology to Application: Where These Trends Will Be Used

The key AI developments in late March 2026 matter because they expand where AI can be applied in real workflows, not just how well models perform in isolation.

AI Coding and Software Development

Multi-agent design and AI-native interfaces are making coding, testing, debugging, and interface generation faster and more modular.

Enterprise Workflow Automation

Tool integration and orchestration efficiency are making AI more useful for structured business processes such as approvals, reporting, and document routing.

Customer Support and Operations

Persistent memory and feedback loops enable AI systems to handle more complex service tasks with greater continuity and reliability.

Knowledge Work and Research

Long-running tasks such as document synthesis, analysis, and technical investigation benefit from systems that can preserve context across multiple steps.

Robotics and Physical Systems

The convergence of hardware and agentic systems is extending AI into robotics, logistics, and other real-time operational environments.

Industrial Perspective

From an industrial engineering standpoint, these applications matter because they reduce bottlenecks, improve continuity, and increase system-level efficiency.

Section Synthesis

Late-March AI technologies matter because they make AI easier to embed into real systems of work across software, operations, research, and industry.

4. A Short Industrial Perspective

The key AI developments in late March 2026 indicate that AI performance is increasingly constrained by system-level factors such as coordination overhead, state management, and cost efficiency rather than model capability alone.

Optimization

The primary optimization target shifts to system throughput, where coordination delays, task dependencies, and resource allocation across agents and tools become the critical bottlenecks.

Reliability

System reliability depends on maintaining stability across multi-step execution, where feedback loops, error propagation control, and state consistency determine overall performance.
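A short calculation shows why error propagation matters so much in multi-step execution. The sketch assumes every step succeeds independently with the same probability, which is a simplification, and the function name `end_to_end_success` is hypothetical.

```python
def end_to_end_success(step_success, steps, retries_per_step=0):
    """Probability that every step in a chain succeeds.

    Assumes steps are independent and each retry has the same success
    probability as the first attempt.
    """
    # A step fails only if the first attempt and every retry all fail.
    per_step = 1 - (1 - step_success) ** (retries_per_step + 1)
    return per_step ** steps
```

Under these assumptions, ten steps at 95% reliability each give an end-to-end success rate of roughly 60%, while allowing one retry per step raises it to roughly 97.5%. Small per-step error rates compound quickly, which is why validation and correction belong in the workflow itself.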

Systems Thinking

AI systems must be designed as integrated architectures, where agents, memory, tools, and interfaces interact as a unified system rather than isolated components.

Industrial Implication

The main opportunity lies in redesigning workflows to minimize variability, reduce cycle time, and improve end-to-end process efficiency using coordinated AI systems.

Section Synthesis

Late-March AI advances matter because they align technical capability with system-level performance requirements in real operational environments.

5. Conclusion: What Late March Reveals About the Next Phase of AI

The key AI developments in late March 2026 show that AI is advancing beyond standalone model improvement and toward coordinated system design. Subagents, persistent memory, tool integration, feedback loops, and orchestration efficiency all point to a phase in which performance depends increasingly on how well systems execute under real constraints.

This matters because the next competitive frontier is no longer defined only by model capability. It is defined by how effectively AI systems can coordinate action, maintain continuity, and scale with acceptable cost, latency, and reliability.

From a technical and industrial perspective, late March clarified the direction of travel. The future of AI will be shaped less by isolated breakthroughs and more by systems that can operate as part of real workflows, software environments, and physical processes.

In that sense, late March 2026 did not simply add new features to AI. It showed more clearly how AI is becoming a system technology.