The ERP-Agent Bridge for Agentic AI

AI Value Gap Series | Part 3 of 4

From Source of Truth to Controlled Autonomous Execution

What should an AI agent trust before it takes action inside the enterprise?

The ERP-Agent Bridge is the execution architecture that answers that question. In production environments, enterprise state is distributed across ERP transactions, master data services, workflow platforms, operational databases, spreadsheets, and human-managed exceptions. The challenge is not simply system connectivity. It is determining which records are authoritative, how freshness and consistency are validated, and where execution boundaries must be enforced.

Seen this way, enterprise AI becomes a control problem, not just a model problem. An agent may interpret intent, retrieve context, and recommend an action, but it should not move directly from reasoning to execution. The ERP-Agent Bridge separates those layers by grounding action in validated business data, policy controls, approval logic, and auditable system interaction.

This article builds on the broader AI Value Gap framework, extends the workflow perspective introduced in AI enterprise architecture, and connects to the scaling problem discussed in AI ROI Reckoning. Its focus is the technical structure of trusted autonomous execution.

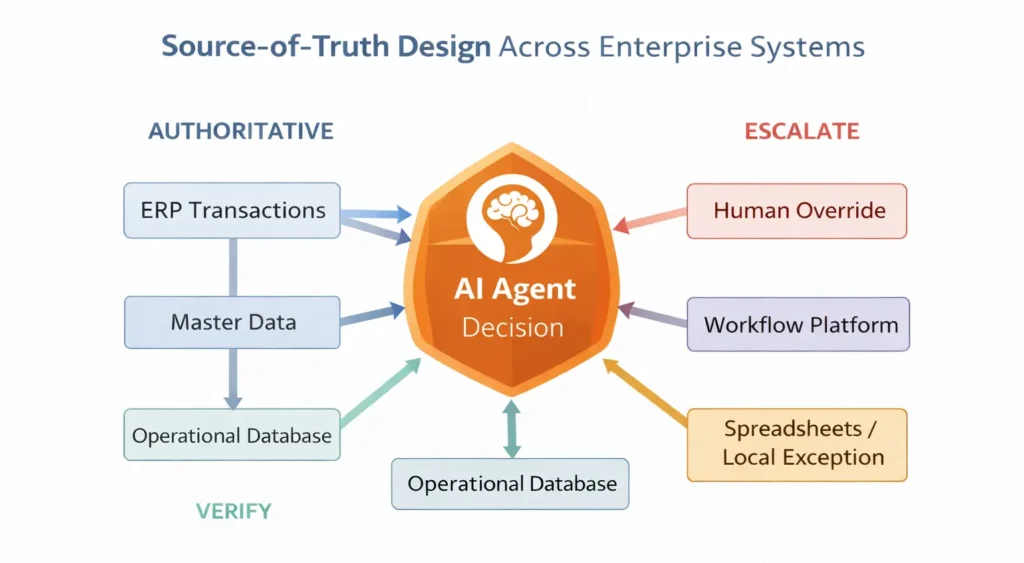

1. Why Source of Truth Is a Systems Design Problem

Enterprise truth rarely resides in one clean system. Business state is spread across ERP transactions, master data services, workflow platforms, operational databases, spreadsheets, and human-managed exceptions, and these sources can disagree. The challenge is not just accessing data, but determining which system is authoritative for a specific decision.

Figure 2 shows why source of truth is not a single database, but a decision problem across enterprise systems.

At the center of the figure, the AI Agent Decision represents the point where the agent must determine whether the available information is reliable enough to support action. The surrounding systems show that enterprise truth is distributed across multiple sources, and the agent should not treat them equally.

The figure groups these systems into three functional roles. Authoritative systems such as ERP Transactions and Master Data provide the primary records for many business decisions. Verification systems such as the Workflow Platform and Operational Database confirm status and execution state. Escalation sources such as Spreadsheets / Local Exception and Human Override handle cases where standard system truth is incomplete, inconsistent, or overridden.

The main point is straightforward: an enterprise agent should not ask only where data resides. It must determine which system has authority, what must be verified, and when execution must be escalated. In that sense, source of truth is not just a data problem. It is a control problem the ERP-Agent Bridge must solve before action is allowed.

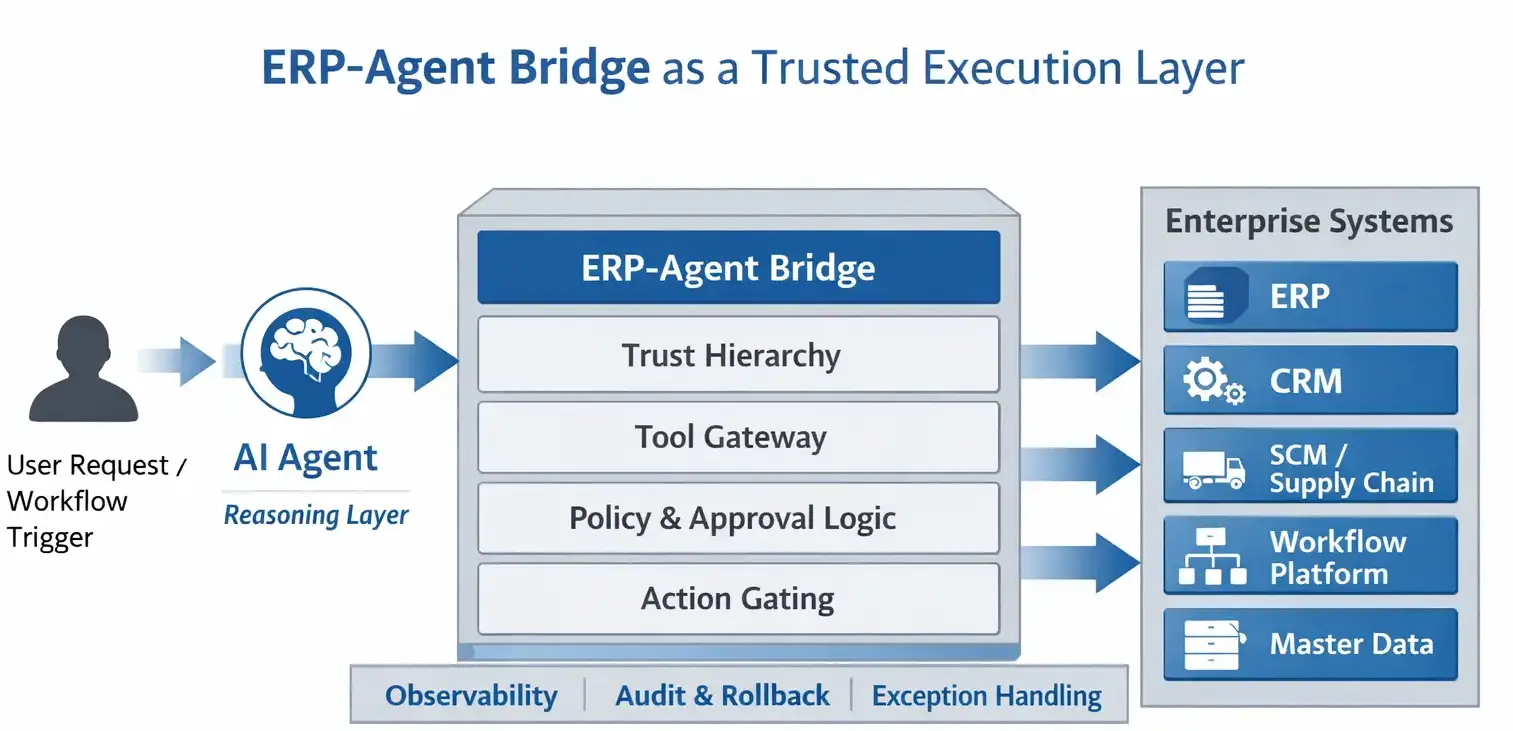

2. The ERP-Agent Bridge as an Execution Architecture

Enterprise integration gives an agent access to systems, not permission to act correctly. A connector can expose ERP transactions, workflow APIs, or master data services, but it does not determine whether a requested action is valid against current business state. Once an agent moves from retrieval to mutation, the system must validate authority, freshness, policy constraints, and transaction eligibility before execution.

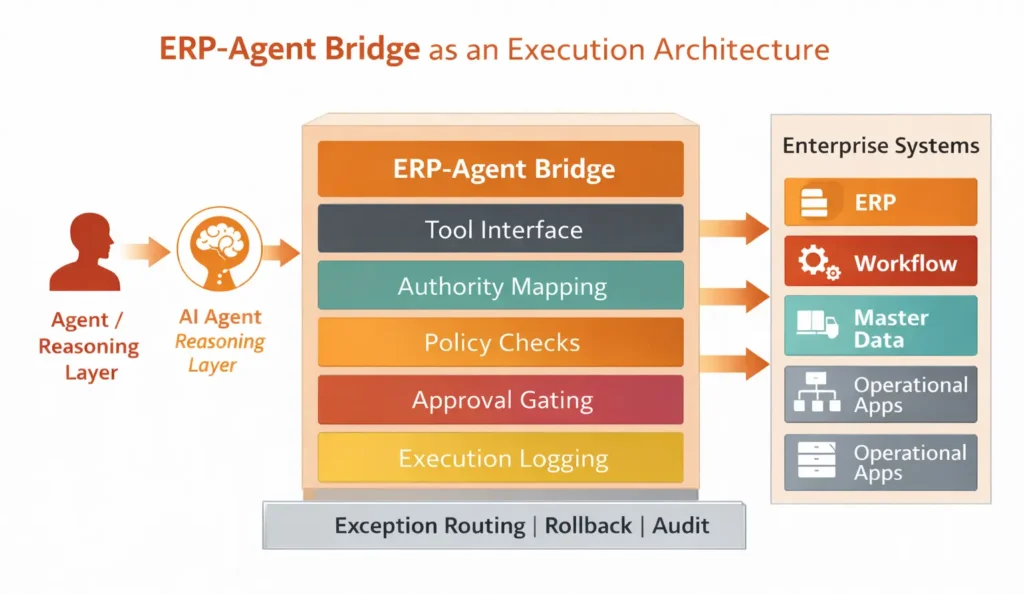

Figure 3 shows how an AI agent is prevented from acting directly on enterprise systems. On the left, the Agent / Reasoning Layer interprets intent and proposes the next step. On the right, Enterprise Systems represent the operational platforms the agent may interact with, including ERP, workflow, master data, and other business applications.

At the center, the ERP-Agent Bridge serves as the controlled layer between reasoning and execution. Every intended action passes through this bridge before it reaches a business system. The bridge defines tool access, maps authority, applies policy checks, enforces approval logic, and records execution.

At the bottom, Exception Routing | Rollback | Audit represents the safeguards that make the architecture suitable for production. The main point is clear: the ERP-Agent Bridge is not just an integration layer. It is the control plane that constrains, validates, and records how agent intent becomes business action.
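The authority and freshness checks the bridge applies before a mutation can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference implementation: the `AUTHORITY` mapping, the `Record` type, the `validate_before_mutation` function, and the five-minute freshness window are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical mapping: which system is authoritative for which record type.
AUTHORITY = {"purchase_order": "erp", "vendor": "master_data"}


@dataclass(frozen=True)
class Record:
    record_type: str
    source_system: str
    fetched_at: datetime


def validate_before_mutation(
    record: Record,
    now: datetime,
    max_age: timedelta = timedelta(minutes=5),  # assumed freshness window
) -> list[str]:
    """Return the names of failed checks; an empty list means the record may be used."""
    failures = []
    if AUTHORITY.get(record.record_type) != record.source_system:
        failures.append("not_authoritative")
    if now - record.fetched_at > max_age:
        failures.append("stale")
    return failures


now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
fresh = Record("purchase_order", "erp", now - timedelta(minutes=1))
stale_copy = Record("purchase_order", "spreadsheet", now - timedelta(hours=2))
print(validate_before_mutation(fresh, now))       # []
print(validate_before_mutation(stale_copy, now))  # ['not_authoritative', 'stale']
```

Returning the list of failed checks, rather than a single boolean, gives the exception route and the audit record something specific to work with.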

3. Separating Reasoning from Execution

As AI agents move from analysis to action, the ERP-Agent Bridge must ensure that model recommendations do not become direct enterprise transactions. The question is not whether the model can recommend an action. It is whether the enterprise can control how that recommendation becomes a real transaction. In production systems, reasoning and execution cannot be treated as the same layer.

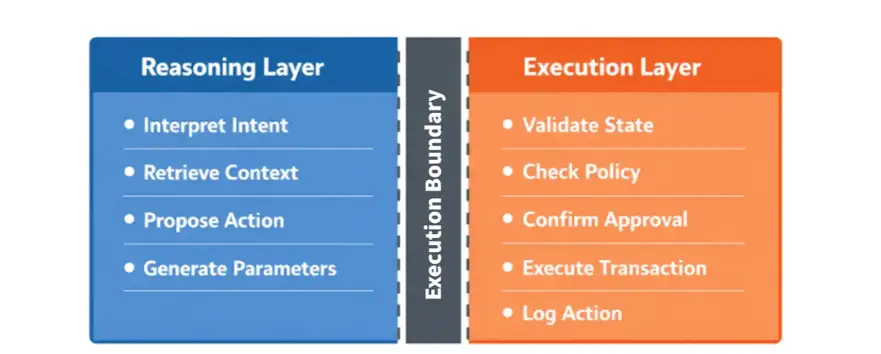

Figure 4 shows the architectural split between what the AI agent is allowed to reason about and what the enterprise system is allowed to execute.

On the left, the Reasoning Layer represents the model-facing side of the workflow. This is where the agent interprets user intent, retrieves context, proposes an action, and generates the parameters needed for the next step. These are valuable capabilities, but they still belong to reasoning rather than execution.

On the right, the Execution Layer represents the system-facing side. Before any transaction is allowed to occur, the system must validate current state, check policy rules, confirm whether approval is required, execute the transaction only if all conditions are satisfied, and then log the action. This layer is deterministic and governed by business controls.

The vertical bar in the middle, labeled Execution Boundary, is the key point of the figure. It shows that the model does not move directly from a proposed action to a real enterprise transaction. Instead, there is a controlled boundary between recommendation and execution. That boundary prevents model output from becoming unchecked system action.

The core message of Figure 4 is straightforward: enterprise AI should let the model propose, but require the system to verify and execute. In this architecture, the agent contributes intelligence, while the enterprise stack retains control over action, policy, and accountability.
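One way to picture the execution boundary in code is to make the model's output plain data and give only the execution layer access to system state. The sketch below is illustrative: `Proposal`, `ExecutionLayer`, the `reserve_stock` action, and the `max_auto_qty` threshold are hypothetical, and the in-memory `inventory` dictionary stands in for a real enterprise system.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Proposal:
    """Output of the reasoning layer: plain data with no system access."""
    action: str
    params: dict


@dataclass
class ExecutionLayer:
    """System-facing side: owns state, policy, and the log."""
    inventory: dict              # stand-in for current enterprise state
    max_auto_qty: int = 100      # hypothetical policy threshold
    log: list = field(default_factory=list)

    def submit(self, p: Proposal) -> str:
        sku, qty = p.params.get("sku"), p.params.get("qty", 0)
        if p.action != "reserve_stock" or sku not in self.inventory:
            outcome = "rejected"          # unknown action or unknown item
        elif qty <= 0 or qty > self.inventory[sku]:
            outcome = "rejected"          # invalid against current state
        elif qty > self.max_auto_qty:
            outcome = "needs_approval"    # policy requires human approval
        else:
            self.inventory[sku] -= qty    # the only place state changes
            outcome = "executed"
        self.log.append((p.action, dict(p.params), outcome))
        return outcome


layer = ExecutionLayer(inventory={"A1": 500})
print(layer.submit(Proposal("reserve_stock", {"sku": "A1", "qty": 10})))   # executed
print(layer.submit(Proposal("reserve_stock", {"sku": "A1", "qty": 200})))  # needs_approval
print(layer.submit(Proposal("delete_vendor", {})))                         # rejected
```

The model can produce any `Proposal` it likes, but state changes in exactly one place, and every submission is logged whether or not it executes.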

4. Trust Hierarchy Across Enterprise Data

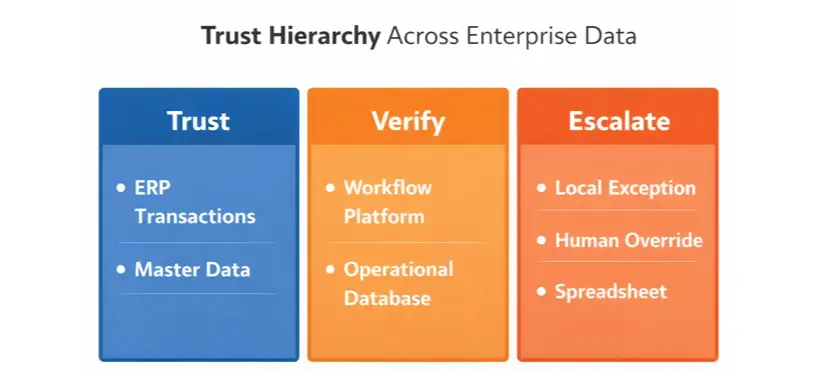

Figure 5 shows how an enterprise agent should treat different data sources before action is allowed. The three columns, Trust, Verify, and Escalate, make clear that enterprise data should not be treated as equally reliable in every situation.

In the Trust column, ERP Transactions and Master Data represent the primary records the agent should rely on first for many operational decisions. These are the systems that usually define the official business state for transactions, identities, and reference data.

In the Verify column, the Workflow Platform and Operational Database show that authoritative records alone may not be enough. Even if a transaction exists in ERP, the system may still need to confirm workflow status, process sequence, or current operational conditions before an action can proceed.

In the Escalate column, Local Exception, Human Override, and Spreadsheet represent cases where the standard system record is incomplete, inconsistent, or temporarily bypassed. These sources should not be treated as direct execution authority. Instead, they should trigger additional validation or human review.

The core message of Figure 5 is simple: the ERP-Agent Bridge must distinguish between what it can trust directly, what it must verify, and what it should escalate. That distinction is what turns raw data access into decision-grade trust.
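The three columns translate naturally into a lookup plus a rule for combining sources. The sketch below assumes one such rule, namely that the most cautious tier among the sources behind a decision wins and that unknown sources escalate. That rule, along with the `Tier`, `SOURCE_TIER`, and `required_handling` names, is an assumption made for illustration rather than something Figure 5 prescribes.

```python
from enum import IntEnum


class Tier(IntEnum):
    TRUST = 0
    VERIFY = 1
    ESCALATE = 2


# Source names follow the columns of Figure 5; the identifiers are illustrative.
SOURCE_TIER = {
    "erp_transactions": Tier.TRUST,
    "master_data": Tier.TRUST,
    "workflow_platform": Tier.VERIFY,
    "operational_database": Tier.VERIFY,
    "spreadsheet": Tier.ESCALATE,
    "local_exception": Tier.ESCALATE,
    "human_override": Tier.ESCALATE,
}


def required_handling(sources):
    """Most cautious tier among the sources; unknown or missing sources escalate."""
    return max(
        (SOURCE_TIER.get(s, Tier.ESCALATE) for s in sources),
        default=Tier.ESCALATE,
    )


print(required_handling(["erp_transactions", "master_data"]).name)        # TRUST
print(required_handling(["erp_transactions", "workflow_platform"]).name)  # VERIFY
print(required_handling(["master_data", "spreadsheet"]).name)             # ESCALATE
```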

5. Tool Design, Action Gating, and Approval Logic

Once trust hierarchy is defined, the next challenge is action control. An enterprise agent should not interact with business systems through unrestricted access or open-ended API calls. It should operate through bounded tools, explicit execution rules, and approval logic that reflect operational risk. This approach aligns with structured tool interfaces such as the Model Context Protocol, which provides a standardized way for models to interact with external tools and systems.

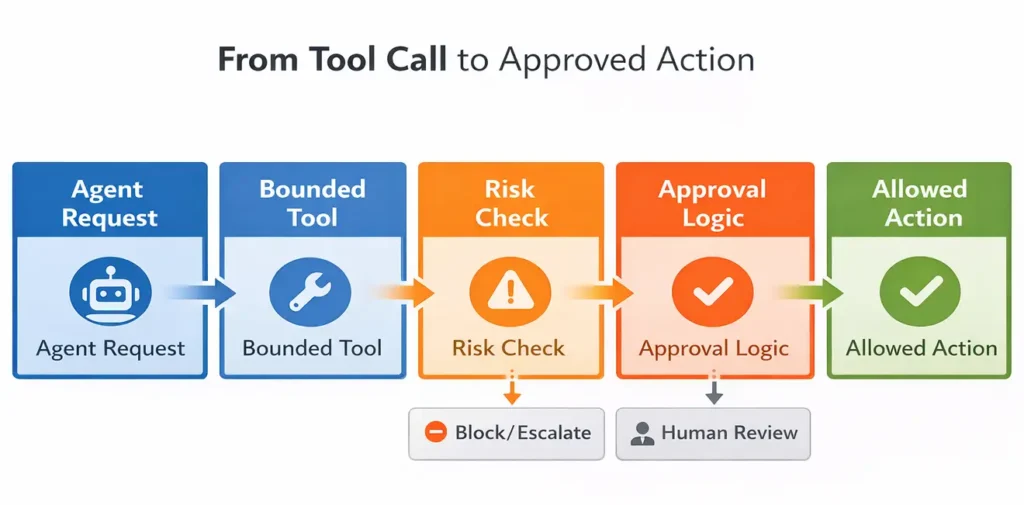

Figure 6 illustrates this control path. A proposed tool call does not become an enterprise transaction by default. It moves through a sequence of bounded execution steps that evaluate scope, risk, and approval requirements before any action is allowed to proceed.

The figure is organized as a left-to-right control flow. It begins with Agent Request, which represents the agent’s intended action. That request does not go directly to an enterprise system. It first passes through a Bounded Tool, meaning the agent can only use a predefined function rather than unrestricted system access.

The flow then moves to Risk Check, where the system evaluates whether the requested action falls within allowed thresholds. If the risk is too high or the conditions are unclear, the action can be blocked or escalated rather than executed automatically.

Next comes Approval Logic. Even if the action passes the initial risk check, it may still require additional control. Some actions can proceed automatically, while others must be routed to Human Review before approval is granted.

Only after these control steps does the flow reach Allowed Action. At that point, the requested operation has been bounded, evaluated, and approved under enterprise rules.

The core message of Figure 6 is clear: enterprise AI should not move directly from agent intent to execution. Actions should pass through bounded tools, risk checks, and approval logic before they are allowed to affect business systems.
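The control path in Figure 6 can be sketched as a small gating function. Everything specific here is a hypothetical example: the `adjust_credit_limit` tool, its risk score (the size of the change), and the two thresholds are assumptions chosen to show the shape of the flow, not recommended values.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class BoundedTool:
    name: str
    risk_score: Callable[[dict], float]  # maps request parameters to a risk score
    auto_limit: float                    # at or below: proceeds automatically
    block_limit: float                   # above: blocked outright


# The agent can only call tools registered here (hypothetical registry).
TOOLS = {
    "adjust_credit_limit": BoundedTool(
        name="adjust_credit_limit",
        risk_score=lambda p: abs(p["new_limit"] - p["old_limit"]),
        auto_limit=1_000,
        block_limit=50_000,
    ),
}


def gate(tool_name: str, params: dict) -> str:
    """Agent Request -> Bounded Tool -> Risk Check -> Approval Logic."""
    tool = TOOLS.get(tool_name)
    if tool is None:
        return "blocked"        # not a predefined tool
    risk = tool.risk_score(params)
    if risk > tool.block_limit:
        return "blocked"        # outside allowed thresholds
    if risk > tool.auto_limit:
        return "human_review"   # allowed only after approval
    return "allowed"


print(gate("adjust_credit_limit", {"old_limit": 5_000, "new_limit": 5_500}))    # allowed
print(gate("adjust_credit_limit", {"old_limit": 5_000, "new_limit": 20_000}))   # human_review
print(gate("adjust_credit_limit", {"old_limit": 5_000, "new_limit": 100_000}))  # blocked
print(gate("drop_table", {}))                                                   # blocked
```

Because unregistered tool names are blocked by default, widening the agent's capabilities requires an explicit change to the registry rather than a change in the prompt.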

6. Observability, Rollback, and Exception Handling

Once actions are allowed to execute, the ERP-Agent Bridge must support operational resilience. Enterprise agents should not be judged only by whether they can act, but by whether their actions can be observed, traced, reversed, and managed when conditions change or failures occur.

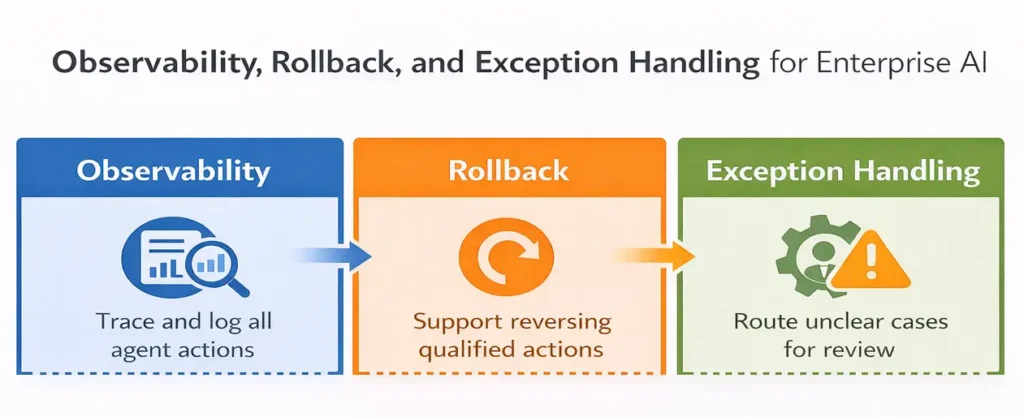

Figure 7 explains the operational safeguards that make autonomous enterprise action manageable after execution is allowed.

The figure is organized into three linked blocks: Observability, Rollback, and Exception Handling. Together, they show that enterprise AI should not stop at deciding or executing an action. It must also support traceability, recovery, and escalation when conditions change or failures occur.

In the Observability block, the message is that agent actions must be traced and logged. The system should record what the agent requested, what checks were applied, and what was finally executed. This creates the visibility needed for diagnosis, audit, and control improvement.

In the Rollback block, the figure shows that eligible actions should be reversible where possible. If an agent triggers the wrong update or acts under stale conditions, rollback mechanisms help limit downstream damage and restore the system to a safe state.

In the Exception Handling block, the figure emphasizes that unclear or out-of-policy cases should be routed for review rather than handled through guesswork. When state is incomplete, inconsistent, or ambiguous, the system should move the case into an exception path with human oversight.

The core message of Figure 7 is that enterprise AI requires more than action capability. It also requires visibility, reversibility, and controlled escalation. Those safeguards are what turn autonomous execution into a manageable operating capability.
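The three blocks can be illustrated together with a toy store that logs every request, routes failed checks to an exception path, and reverses an update only when it is still safe to do so. `AuditedStore` and its methods are hypothetical, and the in-memory dictionary stands in for a real enterprise system with its own transaction and compensation mechanisms.

```python
from datetime import datetime, timezone


class AuditedStore:
    """Toy key-value store standing in for an enterprise system."""

    def __init__(self):
        self.data = {}
        self.audit = []  # append-only trace of requests, checks, and outcomes

    def _record(self, event, **details):
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit.append({"ts": stamp, "event": event, **details})

    def update(self, key, value, checks):
        """Apply the update only if every named check passed; log either way."""
        if not all(checks.values()):
            self._record("exception_routed", key=key, requested=value, checks=checks)
            return None
        entry_id = len(self.audit)  # index of the audit entry written next
        self._record("executed", key=key, requested=value, checks=checks,
                     previous=self.data.get(key), had_key=key in self.data)
        self.data[key] = value
        return entry_id

    def rollback(self, entry_id):
        """Reverse an executed update if the value has not changed since."""
        entry = self.audit[entry_id]
        if entry["event"] != "executed" or self.data.get(entry["key"]) != entry["requested"]:
            self._record("rollback_refused", target=entry_id)
            return False
        if entry["had_key"]:
            self.data[entry["key"]] = entry["previous"]
        else:
            del self.data[entry["key"]]
        self._record("rolled_back", target=entry_id)
        return True


store = AuditedStore()
eid = store.update("PO-1.status", "approved", {"state_fresh": True, "policy_ok": True})
store.update("PO-2.status", "approved", {"state_fresh": False, "policy_ok": True})
store.rollback(eid)
print([e["event"] for e in store.audit])
# ['executed', 'exception_routed', 'rolled_back']
```

The rollback refuses to run when the current value no longer matches what the agent wrote, which reflects the "reversible where possible" qualifier: a later change by another actor should go to review, not be silently overwritten.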

7. Reliability and Governance for Agentic Workflows

Once observability, rollback, and exception handling are in place, the next challenge is operating discipline at scale. Enterprise AI becomes reliable only when workflows remain controlled across permissions, oversight, accountability, and system behavior.

Reliability requires repeatable control

An agentic workflow should behave predictably under both normal and abnormal conditions. The same request should follow the same validation path, approval logic, and exception route unless system state has materially changed. Without that consistency, enterprise AI remains operationally fragile.

Governance defines authority

Governance determines which actions are allowed, which require approval, who owns the policy rules, and how accountability is assigned when something goes wrong. That logic aligns closely with the NIST AI Risk Management Framework, which emphasizes structured risk management and trustworthiness across the AI lifecycle.

Reliability and governance must scale together

Reliability without governance can automate the wrong behavior consistently. Governance without reliability creates rules that break in real operation. Enterprise AI needs both to keep workflows controlled, auditable, and dependable at scale.

The main point is clear: trusted autonomous execution depends on an ERP-Agent Bridge that remains reliable, governed, and accountable at scale.

8. Measuring Workflow-Level Performance

The final test of the ERP-Agent Bridge is whether the workflow performs better, not whether the model performs well alone.

Workflow outcomes matter

Model quality may show capability, but enterprise value appears only when workflow metrics improve. Useful measures include cycle time, manual touches, exception rates, rework, SLA performance, and approval delay.
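A few of these measures can be computed directly from per-case workflow records. The sketch below is illustrative only: the `Case` fields and the `workflow_metrics` function are hypothetical, and the sample values are made up to show the calculation, not drawn from any real deployment.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Case:
    cycle_hours: float       # time from request to completion
    manual_touches: int      # number of human interventions
    raised_exception: bool   # whether the case left the standard path
    met_sla: bool


def workflow_metrics(cases):
    """Summarize workflow-level outcomes for a non-empty list of cases."""
    n = len(cases)
    return {
        "avg_cycle_hours": mean(c.cycle_hours for c in cases),
        "avg_manual_touches": mean(c.manual_touches for c in cases),
        "exception_rate": sum(c.raised_exception for c in cases) / n,
        "sla_rate": sum(c.met_sla for c in cases) / n,
    }


# Made-up sample data for illustration only.
baseline = [Case(10.0, 2, False, True), Case(20.0, 4, True, False)]
print(workflow_metrics(baseline))
```

Running the same summary over cases handled before and after the bridge is introduced gives a like-for-like comparison at the workflow level, independent of any model benchmark.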

Control should create measurable gains

Execution controls are not only for reducing risk. They should also make autonomous workflows reliable enough to improve operational performance, and that improvement should be measurable.

The main point is simple: enterprise AI creates value only when controlled execution improves the workflow itself.

Conclusion

The promise of agentic AI does not depend only on smarter models. It depends on whether enterprises can build a trusted execution layer between AI intent and business action. That is the role of the ERP-Agent Bridge.

When source of truth is defined, reasoning is separated from execution, actions are gated by policy, and workflows are supported by observability, rollback, governance, and measurable performance control, autonomous execution becomes operationally credible. In enterprise AI, the real challenge is not intelligence alone. It is engineered trust.